The landscape of digital information retrieval is undergoing a fundamental shift as Google integrates advanced generative artificial intelligence into its core visual search tools. In a significant update to its "Circle to Search" and "Google Lens" features, the technology giant has introduced the ability for its systems to identify and analyze multiple objects within a single image simultaneously. This advancement marks a departure from the traditional one-item-at-a-time visual search model, allowing users to deconstruct complex scenes—such as a fully furnished room or a multi-layered fashion ensemble—into individual, searchable components with a single gesture. By leveraging the multimodal capabilities of its Gemini models, Google aims to transform the camera from a simple recording device into an intelligent interface capable of understanding the "why" behind a user’s query.

The Evolution of Visual Intelligence: A Chronology of Google Lens

The journey toward simultaneous multi-object recognition has been nearly a decade in the making. Google Lens was first introduced in 2017 as a Pixel-exclusive feature, primarily designed to identify landmarks, plants, and business cards. At its inception, the technology relied on computer vision models that were highly specialized but limited in scope. Over the following years, the service expanded to all Android and iOS devices, integrating with Google Photos and the primary Google Search app.

A pivotal moment occurred in 2022 with the introduction of "Multisearch," which allowed users to combine images and text in a single query, for instance by searching for a patterned shirt and adding the text "in blue." However, even with these advancements, the system remained largely focused on a single primary subject within the frame. The latest update, powered by Google's Gemini models, represents the third major phase of visual search: the move from object identification to scene comprehension. This timeline reflects a broader industry trend in which search engines are moving away from literal keyword matching toward semantic and visual understanding.

Technical Architecture: The Brain and the Library

To understand how Google’s AI now processes complex visual queries, it is necessary to examine the interplay between the large language model (LLM) and the search index. Dounia Berrada, Senior Engineering Director for Google Search, describes the system as a partnership between a "brain" and a "library." In this analogy, the Gemini model serves as the brain, possessing the cognitive ability to "see" and reason about the contents of an image. The visual search backend serves as the library, containing a massive repository of billions of indexed web results.

The breakthrough in the current update is a process known as the "fan-out" technique. When a user activates Circle to Search on an Android device or uploads a photo to Lens, the Gemini model performs multi-object reasoning. It does not merely look for the most prominent item; it identifies all relevant entities within the frame. For example, in a photo of a garden, the model recognizes the specific species of various flowers, the type of mulch used, and the brand of the garden tools visible in the background.

Once these entities are identified, the system executes the fan-out: it triggers multiple, parallel searches for every identified object at once. Instead of the user having to crop and re-search for each item individually, the AI aggregates the data from these concurrent searches. It then synthesizes the information into a cohesive, easy-to-read response that provides context, such as care instructions for the plants or retail links for the tools, all within seconds.
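The fan-out pattern described above can be illustrated with a minimal Python sketch. It is not Google's implementation: `search_entity`, `fan_out`, `synthesize`, and `answer_scene` are hypothetical names, the entity list is assumed to have already been produced by the model, and the backend lookup is simulated with a short sleep.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SearchResult:
    entity: str
    summary: str


async def search_entity(entity: str) -> SearchResult:
    """Hypothetical stand-in for one lookup against a visual search backend."""
    await asyncio.sleep(0.01)  # simulated network latency, not a real call
    return SearchResult(entity=entity, summary=f"top results for {entity}")


async def fan_out(entities: list[str]) -> list[SearchResult]:
    """Start one search per detected entity and wait for all of them together."""
    tasks = [search_entity(e) for e in entities]
    # gather runs the coroutines concurrently and preserves input order
    return await asyncio.gather(*tasks)


def synthesize(results: list[SearchResult]) -> str:
    """Merge per-entity results into one response (here, a simple join)."""
    return "\n".join(f"{r.entity}: {r.summary}" for r in results)


def answer_scene(entities: list[str]) -> str:
    """Fan out over the entities, then combine the results into one answer."""
    return synthesize(asyncio.run(fan_out(entities)))


if __name__ == "__main__":
    print(answer_scene(["rose", "cedar mulch", "pruning shears"]))
```

Because the searches run concurrently, the user waits roughly as long as the slowest single lookup rather than the sum of all of them, which is what removes the need to crop and re-search item by item.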

Market Context and the Rise of Multimodal Search

Google’s push into advanced visual search comes at a time when consumer behavior is increasingly leaning toward visual-first platforms. According to industry data, nearly 36% of American consumers have used visual search for shopping, and among Gen Z and Millennial demographics, that share is significantly higher. Platforms like Pinterest and Instagram have long leveraged visual discovery to drive e-commerce, putting pressure on traditional search engines to provide more intuitive, image-based tools.

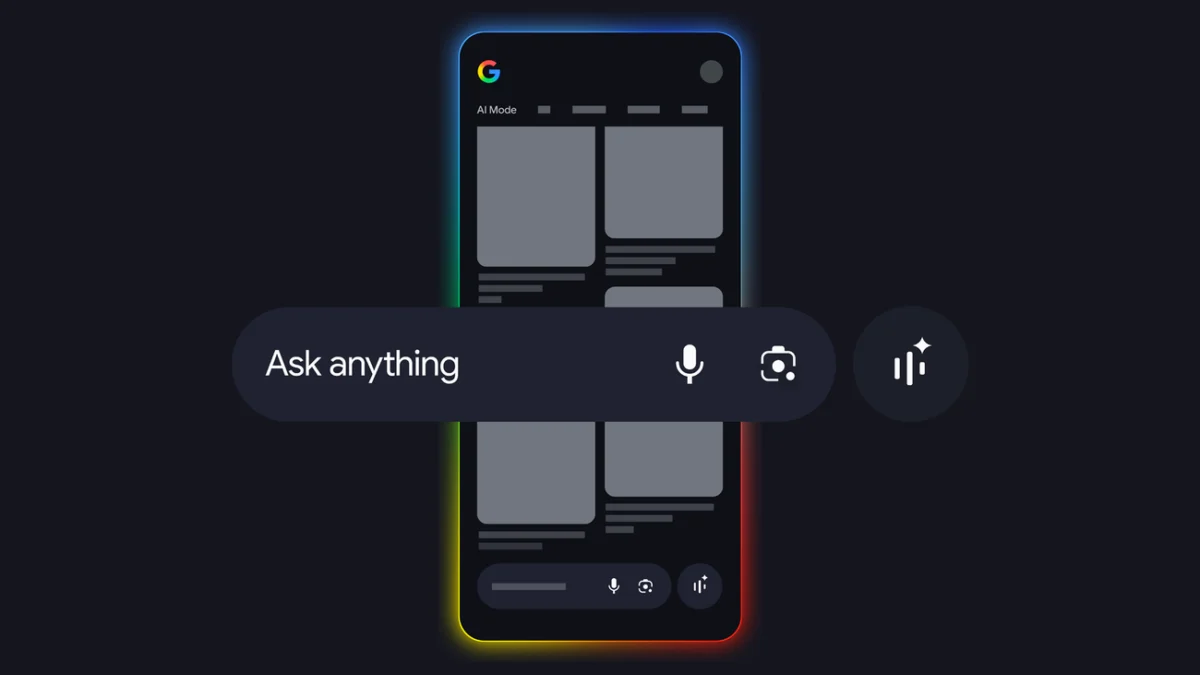

Furthermore, the integration of AI into search—often referred to as the Search Generative Experience (SGE) or AI Mode—is a response to the competitive pressure from Microsoft’s Bing (integrated with OpenAI’s DALL-E and GPT models) and Apple’s "Visual Look Up" feature. By making Circle to Search a seamless part of the Android operating system, Google is attempting to capture "intent" at the moment of discovery, reducing the friction between seeing an item and finding information about it.

Strategic Implications for E-commerce and Retail

The ability to search for an entire outfit or a room’s decor simultaneously has profound implications for the retail sector. Traditionally, the "path to purchase" involved a user seeing an item, attempting to describe it in text, and then browsing through search results that might or might not be relevant. Simultaneous multi-object search collapses this funnel.

For retailers, this technology emphasizes the importance of high-quality product imagery and robust metadata. As Google’s AI becomes better at "fanning out" searches, products that are clearly visible in lifestyle photography—even if they are not the primary focus of the image—become discoverable. This creates a "passive discovery" stream where a user searching for a sofa might end up purchasing the throw pillows or the rug pictured in the same inspirational photo.

Industry analysts suggest that this could lead to a shift in digital advertising spend. If AI can accurately identify and link to products within any image on a user’s screen, the value of "shoppable" content increases. Brands may move toward more integrated placements in high-quality editorial content, knowing that Google’s visual AI can bridge the gap between inspiration and transaction.

Beyond Shopping: Educational and Practical Applications

While the commercial applications of simultaneous visual search are immediate, the technology’s utility extends into education and daily problem-solving. Berrada notes that the goal is to move from asking "What is this one thing?" to "Explain this entire scene to me."

In an educational context, a student can take a photo of a complex math problem or a page from a biology textbook. The AI can identify the various components of a diagram, provide definitions for key terms, and offer a step-by-step explanation of the concepts involved. In a museum setting, a single photo of a gallery wall can trigger a fan-out search that provides biographies for every artist and historical context for every painting in view.

This "scene comprehension" also aids in accessibility. For users with visual impairments or those navigating unfamiliar environments, the ability for an AI to describe a complex scene—identifying obstacles, signs, and landmarks simultaneously—could significantly enhance mobile assistance tools.

Official Responses and Technical Challenges

Internal feedback from Google’s engineering teams suggests that the primary challenge in developing these tools was latency. Performing a dozen searches in the time it usually takes to do one requires immense computational power and highly optimized algorithms. The "AI Mode" in Google Search is designed to manage this load by using the multimodal capabilities of Gemini to filter and prioritize the most helpful results before presenting them to the user.
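The latency trade-off can be made concrete with a rough back-of-the-envelope sketch. The figures are illustrative assumptions rather than measurements from Google, and the function names and relevance scores are hypothetical. The sketch compares running searches one after another with running them in parallel, then keeps only the highest-scoring results, as a simple stand-in for the filtering and prioritizing step.

```python
import heapq


def sequential_latency_ms(latencies_ms: list[float]) -> float:
    """Total wait if each search starts only after the previous one finishes."""
    return sum(latencies_ms)


def parallel_latency_ms(latencies_ms: list[float]) -> float:
    """Total wait if all searches start together: the slowest one dominates."""
    return max(latencies_ms, default=0.0)


def prioritize(scored_results: list[tuple[str, float]], k: int) -> list[str]:
    """Keep the k results with the highest (hypothetical) relevance score."""
    top = heapq.nlargest(k, scored_results, key=lambda pair: pair[1])
    return [name for name, _ in top]


if __name__ == "__main__":
    latencies = [100.0] * 12  # assumed: a dozen searches at 100 ms each
    print(sequential_latency_ms(latencies))  # 1200.0
    print(parallel_latency_ms(latencies))    # 100.0
    print(prioritize([("rug", 0.4), ("sofa", 0.9), ("lamp", 0.7)], k=2))
```

Under these assumptions, a dozen parallel searches finish in about the time of one, but only if the backend has the capacity to serve all twelve at once, which is where the computational cost comes from.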

"Visual search is redefining how we interact with information," Berrada stated during the technical briefing. "Lens should be intelligent enough to understand the ‘why’ behind your search, making it effortless to get help with what you see on your screen, or in the world around you."

However, the rollout of such powerful visual tools also raises questions regarding privacy and copyright. As the AI becomes more adept at "reading" the world through a camera lens, the tech industry continues to grapple with the ethics of data scraping and the rights of content creators whose images power these visual libraries. Google has maintained that its AI tools are designed to drive traffic to the open web by providing helpful links alongside AI-generated summaries, a stance that remains a point of debate among digital publishers.

Broader Impact and the Future of Search

The introduction of multi-object reasoning in Circle to Search and Lens is a clear indicator of where the future of information retrieval lies. We are moving toward an era of "ambient search," where the boundaries between the digital and physical worlds are increasingly blurred. In this future, search is not a destination (a website you visit) but a layer of intelligence that sits on top of every image, video, and physical environment.

As Google continues to refine these models, the expectation is that the "fan-out" technique will become even more sophisticated, eventually incorporating real-time video analysis. This would allow users to point their cameras at a moving scene—such as a street corner or a sporting event—and receive a live, annotated overlay of information.

The current updates to Circle to Search and Google Lens are more than just a convenience for shoppers; they are a fundamental restructuring of how machines process human curiosity. By allowing for the simultaneous exploration of multiple variables within a single visual frame, Google is aligning its search technology with the way the human eye actually perceives the world: as a complex, interconnected tapestry of information rather than a series of isolated objects. For the end user, this means less time spent typing and more time discovering, as the AI takes on the heavy lifting of deconstructing the visual world.