The Evolution of Multimodal Generation: Integrating Personal Intelligence

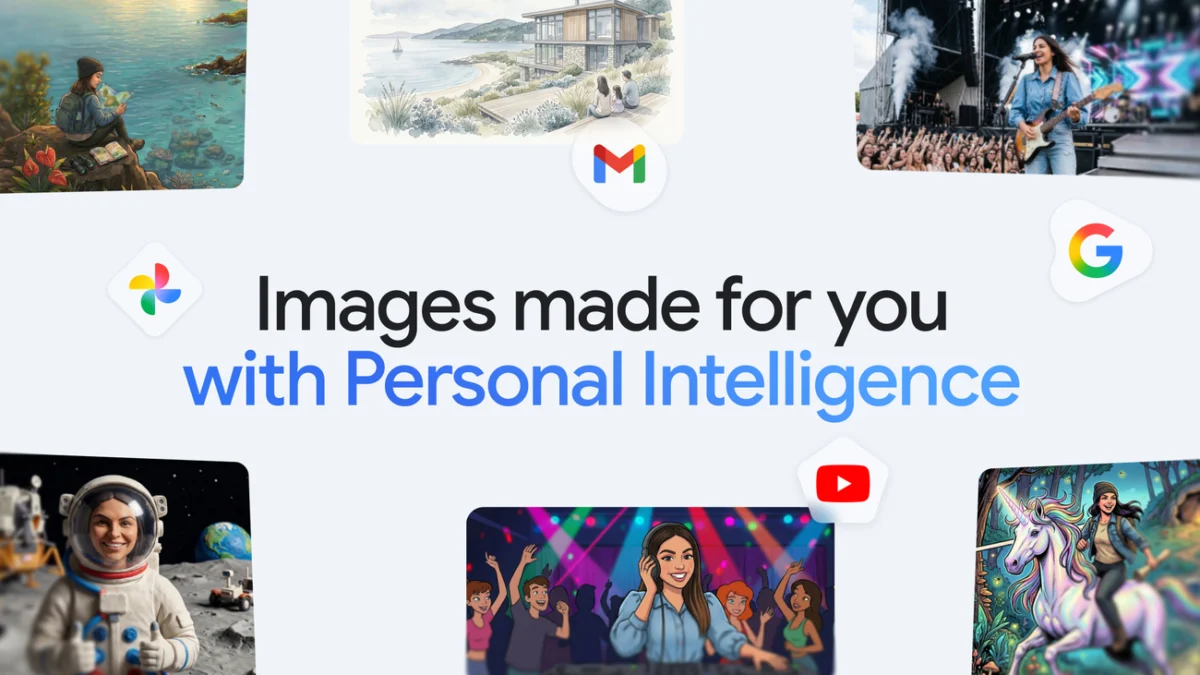

A persistent hurdle for generative AI has been the "cold start" problem: users must supply extensive context before a model can produce a result that matches their vision. Previously, creating an image of oneself in a specific setting meant uploading reference photos, describing physical features in detail, and refining the prompt over multiple iterations. Google’s new Personal Intelligence framework aims to eliminate this friction.

By connecting Gemini to the broader Google ecosystem, specifically Google Photos and other linked workspace apps, the AI gains an inherent understanding of the user’s world. When a user asks Gemini to "design a dream house" or "create a picture of my desert island essentials," the Nano Banana 2 model does not pull from a generic database of stock concepts. Instead, it analyzes the user’s historical preferences and saved data to generate results that reflect their specific aesthetic tastes and lifestyle. This integration allows the model to "fill in the blanks" automatically, grounding every creative output in the user’s real-world context.

Leveraging Google Photos for Character Consistency

A standout feature of this update is the ability for users to star in their own AI-generated creations. For years, Google Photos has used machine learning to group and label the people and pets in a user’s library. The Gemini app now draws on these existing labels to guide image generation.

Users can issue commands such as "create a claymation image of me and my family enjoying our favorite activity." Because the AI has access to the labeled faces in the user’s Google Photos library, it can maintain character consistency—a notoriously difficult task in generative AI—without requiring the user to download and re-upload files. This functionality extends to various artistic styles, including watercolors, charcoal sketches, and oil paintings. The system effectively transforms a personal photo library into a dynamic asset gallery for creative expression.

Technical Specifications and User Control

To ensure the AI remains a tool for the user rather than an autonomous actor, Google has implemented several layers of creative control. Recognizing that AI may not always select the ideal reference photo on the first attempt, the interface now includes a "Sources" button. This transparency feature allows users to see exactly which image from their library was used as a reference.

If the generated output is unsatisfactory, users can manually override the AI’s choice. By clicking a "+" icon, users can select a different reference photo from Google Photos to provide a new perspective or style. Furthermore, the system supports iterative refinement; users can provide natural language feedback to correct specific details, such as clothing color or background elements, until the image aligns with their expectations.
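The control flow described above can be sketched in a few lines of Python. This is an illustrative model only, not Google’s implementation: the names `Photo`, `GenerationRequest`, `pick_reference`, and `build_job` are hypothetical, and the rule of picking the photo whose labels cover the most requested subjects is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class Photo:
    photo_id: str
    labels: frozenset  # people/pet labels already attached in the library


@dataclass
class GenerationRequest:
    prompt: str
    subjects: set                             # labels the prompt refers to
    override_photo_id: Optional[str] = None   # set when the user picks via "+"
    feedback: list = field(default_factory=list)  # natural-language corrections


def pick_reference(library, request):
    """Return the user's manual choice if present, else the best label match."""
    if request.override_photo_id is not None:
        by_id = {p.photo_id: p for p in library}
        return by_id[request.override_photo_id]
    # Assumed heuristic: the photo whose labels cover the most requested subjects.
    return max(library, key=lambda p: len(p.labels & request.subjects))


def build_job(library, request):
    """Assemble the prompt and record which photo was used (the "Sources" view)."""
    reference = pick_reference(library, request)
    return {
        "prompt": " ".join([request.prompt, *request.feedback]),
        "sources": [reference.photo_id],
    }


library = [
    Photo("a", frozenset({"me"})),
    Photo("b", frozenset({"me", "dog"})),
]
request = GenerationRequest("claymation portrait", subjects={"me", "dog"})
print(build_job(library, request))

# The user overrides the reference and adds a correction, then regenerates.
request.override_photo_id = "a"
request.feedback.append("make the jacket red")
print(build_job(library, request))
```

Keeping the chosen reference in a `sources` field mirrors the transparency idea: whatever the system selects, the user can see it and replace it.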

A Chronology of Google’s AI Integration

The introduction of personalized image generation is the latest milestone in Google’s rapid acceleration of AI development, which began in earnest in 2023 with the launch of Bard.

- February 2023: Google introduces Bard, its initial response to the rise of large language models (LLMs).

- December 2023: The company unveils Gemini, at the time its most capable multimodal model, designed to handle text, images, video, and audio natively.

- February 2024: Google rebrands Bard as Gemini and introduces the Gemini Advanced subscription, powered by the Ultra 1.0 model.

- May 2024: At the annual Google I/O conference, the company emphasizes "AI for everyone," showcasing deep integration across Android and Workspace.

- Current Update: The rollout of Personal Intelligence and Nano Banana 2 integration marks the transition from "General AI" to "Personalized AI," focusing on the individual user’s data ecosystem.

Supporting Data and Market Context

The move to personalize AI is supported by a growing body of consumer data indicating that users are seeking more utility and less complexity from generative tools. According to industry reports, while the global AI market is projected to reach over $1.8 trillion by 2030, a significant barrier to mainstream adoption remains the "prompting gap"—the difficulty average users face when trying to communicate complex ideas to a machine.

Google’s advantage in this sector is its massive existing user base. Google Photos currently boasts over 1 billion users worldwide, with billions of photos uploaded daily. By tapping into this existing repository, Google provides a level of personalization that competitors like OpenAI or Midjourney cannot easily replicate, as those platforms lack a native, long-term storage ecosystem for personal user data.

Privacy Commitments and Ethical Frameworks

The integration of personal data into generative AI models inevitably raises questions regarding privacy and data security. Google has addressed these concerns by clarifying the boundaries of its model training. The company stated that the Gemini app does not directly train its underlying foundational models on a user’s private Google Photos library.

Instead, the system uses "limited information," such as specific prompts and the resulting model responses, to improve functionality over time. The connection between Gemini and Google Photos remains an opt-in experience. Users must proactively grant permission for the AI to access their library, and these permissions can be revoked at any time through the app’s settings. This "privacy-by-design" approach is intended to mitigate the "creepy factor" associated with AI having deep knowledge of a user’s personal life.
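The opt-in behavior can be pictured as a simple gate in front of the photo library. The sketch below is a hypothetical illustration, not Google’s code: `ConnectionSettings` and `reference_candidates` are invented names, and the only assumptions are the ones stated above, namely that access is off by default and can be revoked at any time.

```python
from dataclasses import dataclass


@dataclass
class ConnectionSettings:
    photos_connected: bool = False  # opt-in: disconnected until the user agrees

    def grant(self):
        self.photos_connected = True

    def revoke(self):
        self.photos_connected = False


def reference_candidates(settings, library):
    """Expose library photos to generation only while the user has opted in."""
    return list(library) if settings.photos_connected else []


settings = ConnectionSettings()
print(reference_candidates(settings, ["photo-1", "photo-2"]))  # nothing before opt-in

settings.grant()
print(reference_candidates(settings, ["photo-1", "photo-2"]))

settings.revoke()
print(reference_candidates(settings, ["photo-1", "photo-2"]))  # access removed again
```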

Official Responses and Strategic Vision

Animish Sivaramakrishnan, Group Product Manager for the Gemini App, and David Sharon, Multimodal Generation Lead, emphasized that the goal of this update is to allow users to "spend more time creating and less time explaining." In their official communications, they highlighted that Personal Intelligence makes the app feel "tailored to you, not just a generic tool that works the same for everyone."

Industry analysts suggest this move is a strategic attempt to solidify Gemini as the central "operating system" for a user’s digital life. By making the AI more useful through personalization, Google increases user "stickiness" within its ecosystem, making it harder for competitors to lure users away to standalone AI platforms.

Broader Impact and Future Implications

The rollout of personalized image creation has significant implications for the future of digital media and personal communication. As these tools become more accessible, the barrier between professional-grade graphic design and casual content creation continues to blur.

- Democratization of Creativity: Users without technical design skills can now produce high-quality, personalized artwork for social media, family invitations, or professional presentations.

- The Shift Toward Agentic AI: This update moves Gemini closer to being an "AI agent"—a tool that doesn’t just respond to commands but understands the context and intent behind them based on historical data.

- Competition in the AI Space: Google’s integration of personal data sets a high bar for competitors. It forces other tech giants to consider how their own data silos (such as Apple’s iCloud or Microsoft’s OneDrive) can be leveraged for similar personalized experiences.

Rollout and Eligibility

The new personalized image generation experience is currently rolling out to eligible subscribers in the United States. This includes users signed up for the Google AI Plus, Pro, and Ultra plans. While the initial launch is focused on the mobile app experience, Google has confirmed plans to bring these features to Gemini in Chrome on desktop and to a broader global audience in the coming months.

As the feature hits devices over the next several days, the tech community will be watching closely to see how effectively the Nano Banana 2 model handles the nuances of personal identity and whether the privacy safeguards satisfy an increasingly data-conscious public. For now, Google’s message is clear: the future of AI is not just intelligent—it is personal.