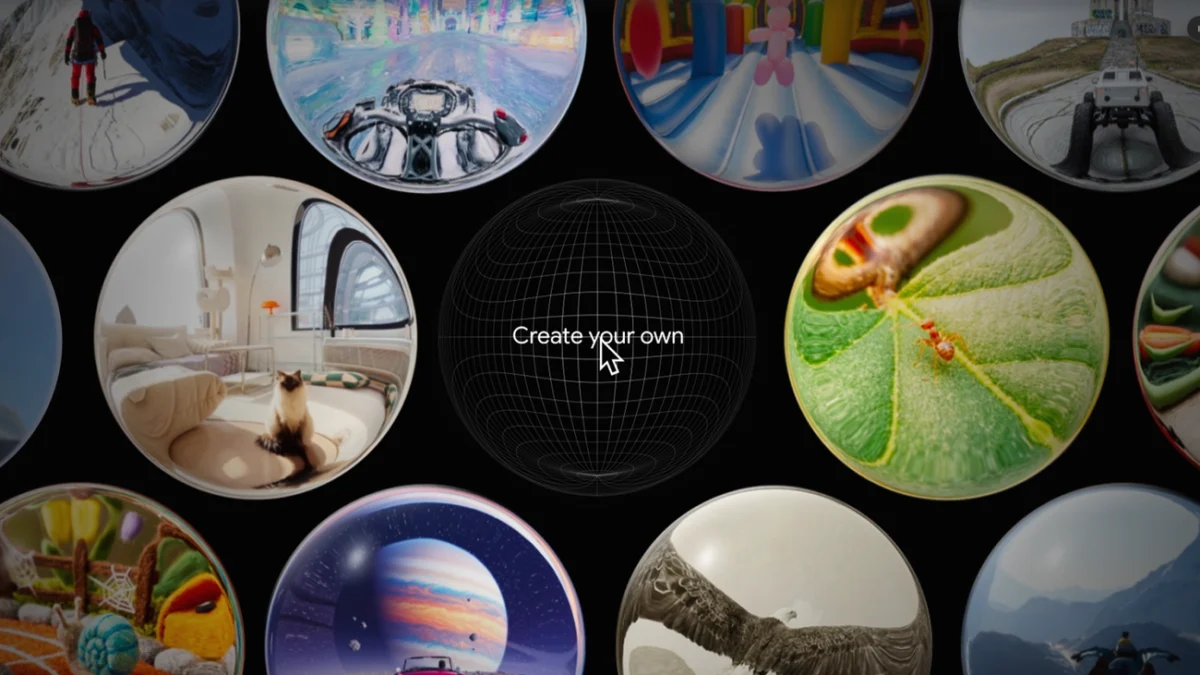

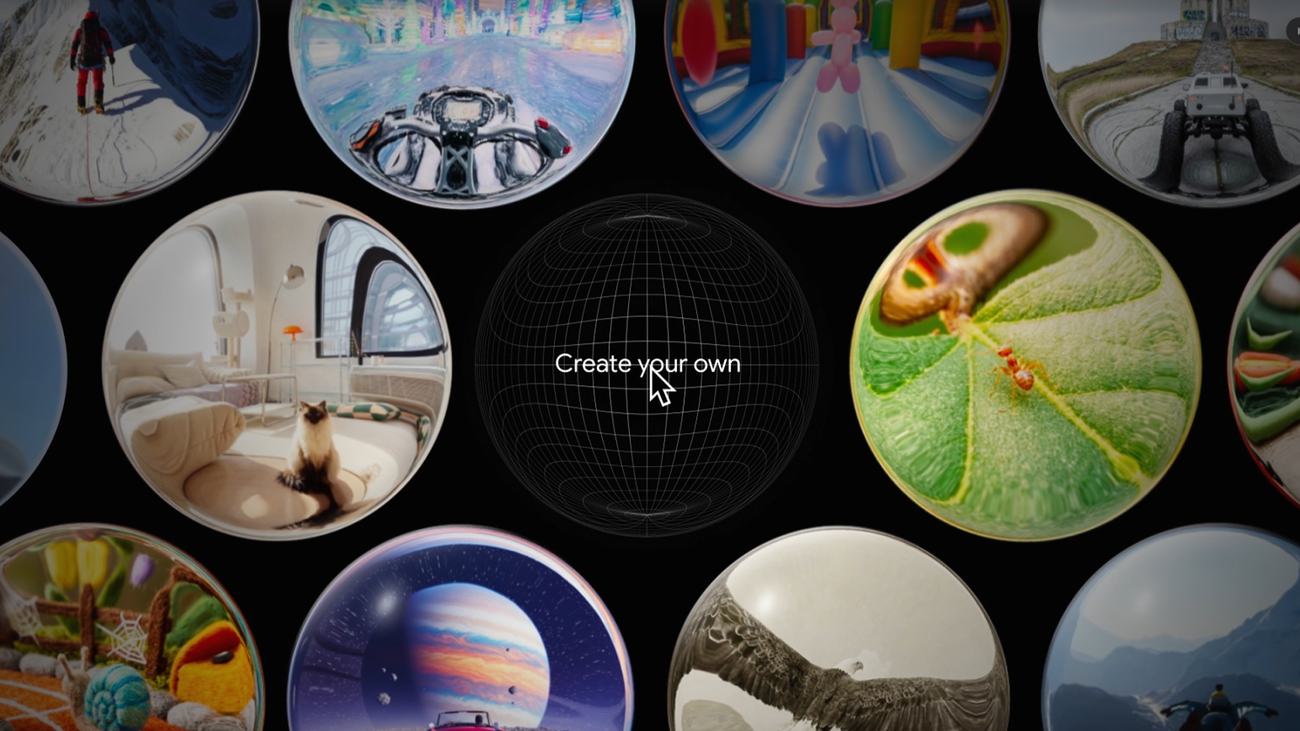

Google DeepMind has turned its experimental world-model research into a hands-on product with the rollout of Project Genie, a generative world model that lets users create, inhabit, and navigate interactive digital environments from simple text and image prompts. Project Genie goes beyond static image generation or passive video synthesis. It is built on what researchers call "interactive world models," which generate an environment that responds to the user's input. Currently available to Google AI Ultra subscribers in the United States who are 18 or older, the tool serves as a sandbox for digital storytelling, game design, and synthetic environment simulation.

The platform interprets natural language descriptions and visual inputs to construct a coherent, navigable space. Traditional video games rely on hand-authored assets and game engines such as Unreal Engine or Unity, whose physics is hard-coded. Project Genie instead generates its environments and the rules of movement within them on the fly. It builds on years of research into how AI can learn approximations of physical laws and "object permanence" simply by observing large datasets of video footage.

The Technological Foundation of Project Genie

The genesis of Project Genie can be traced back to early 2024, when Google DeepMind researchers published a paper describing a model trained on 200,000 hours of publicly available platformer video game footage. The objective was a model that could not only predict the next frame in a video sequence but also capture how a character's actions change the environment. Examples of such actions include jumping, running, or colliding with an object. The key ingredient was a system of "latent actions." Instead of being explicitly programmed with a world's controls, the AI infers a small set of discrete controls from unlabeled video.
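The core idea behind latent actions can be illustrated with a toy example. This sketch is not Genie's model. Genie learns its action codes from raw pixels, whereas this example assumes each frame transition has already been reduced to a hypothetical 2D displacement. It also uses a hand-written codebook. The example shows only the quantization step, in which an observed change is snapped to the nearest of a few discrete action codes.

```python
import math

# Hypothetical codebook of discrete latent actions. In the published approach
# these codes are learned from video; here they are hand-written 2D
# displacements chosen purely for illustration.
CODEBOOK = {
    0: (0.0, 0.0),   # no movement
    1: (1.0, 0.0),   # rightward
    2: (-1.0, 0.0),  # leftward
    3: (0.0, 1.0),   # upward (e.g. a jump)
}


def infer_latent_action(prev_pos, next_pos):
    """Map an observed change between two frames to the nearest codebook entry."""
    delta = (next_pos[0] - prev_pos[0], next_pos[1] - prev_pos[1])
    return min(CODEBOOK, key=lambda code: math.dist(delta, CODEBOOK[code]))


# A short, noisy trajectory: roughly right, then up, then left.
trajectory = [(0.0, 0.0), (0.9, 0.1), (1.0, 1.2), (0.1, 1.1)]
actions = [infer_latent_action(a, b) for a, b in zip(trajectory, trajectory[1:])]
print(actions)  # [1, 3, 2]
```

No transition here carries a label; the action ids come purely from nearest-code assignment. In a learned system, both the encoder that summarizes each transition and the codebook itself are trained. That is what would allow controls to be discovered rather than specified.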

In its current experimental form, Project Genie pairs the world model with Nano Banana Pro, Google's image generation model. Nano Banana Pro produces a preview image of the world, so users can review and adjust it before committing to a full exploration. Integration with Gemini also helps users refine their creative intent, using a large language model to turn a vague idea into a more detailed, workable prompt.

Chronology of Development and Availability

The trajectory of Project Genie reflects Google’s broader strategy of integrating advanced DeepMind research into consumer-facing products.

- February 2024: Google DeepMind introduces "Genie" (Generative Interactive Environments) as a research project. The initial focus is on 2D platformer environments, showing that an AI can learn to simulate interactive worlds from video alone.

- Late 2024: The research expands to include 360-degree environments and first-person perspectives, moving the technology closer to a virtual reality or "open world" simulator.

- Early 2025: Project Genie enters its experimental prototype phase for public testing. The rollout is limited to Google AI Ultra subscribers, a premium tier designed for power users and developers who provide feedback to fine-tune the model’s safety and performance.

- Current Phase: The platform is being evaluated for its creative potential, with Google publishing prompting guidance to help users get better results from the generative engine.

Strategic Implementation: A Guide to World Building

To help users get high-quality outputs, Google has outlined a structured approach to prompting the Genie engine. The approach emphasizes moving from abstract concepts to concrete, sensory-rich descriptions.

Environmental Granularity and Setting the Scene

The first step in the Genie workflow is describing the environment. Users are encouraged to move beyond simple nouns like "forest" or "city" and instead provide a hierarchy of details. A well-developed prompt might describe the atmospheric density, weather (such as a "gale-force wind swaying neon-lit trees"), and specific architectural styles. By specifying whether a world is "photo-realistic," "pixelated," or "hand-drawn," the user sets the aesthetic constraints the AI must follow.
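The layered prompt structure described above can be sketched as a small helper that assembles a scene description from separate fields. Project Genie takes free-form text, so the `SceneSpec` class and its field names are hypothetical. They offer a way of organizing prompt details, not an official format.

```python
from dataclasses import dataclass, field


# Hypothetical structure for organizing a scene prompt; Project Genie itself
# accepts free-form text, not this object.
@dataclass
class SceneSpec:
    setting: str                  # core location, e.g. "a coastal city at night"
    style: str                    # e.g. "photo-realistic", "pixelated", "hand-drawn"
    atmosphere: list[str] = field(default_factory=list)  # weather, lighting, density
    architecture: str = ""        # optional architectural style

    def to_prompt(self) -> str:
        """Join the fields into one prompt, most general detail first."""
        parts = [f"A {self.style} world: {self.setting}"]
        if self.atmosphere:
            parts.append("Atmosphere: " + ", ".join(self.atmosphere))
        if self.architecture:
            parts.append("Architecture: " + self.architecture)
        return ". ".join(parts) + "."


spec = SceneSpec(
    setting="a coastal city at night",
    style="pixelated",
    atmosphere=["dense fog", "gale-force wind swaying neon-lit trees"],
    architecture="stacked brutalist towers",
)
print(spec.to_prompt())
```

Keeping the style in its own field makes it easy to re-render the same scene as "photo-realistic" or "hand-drawn" and compare the results.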

Character Agency and Movement Logic

A distinctive feature of Project Genie is its ability to map movement styles to character descriptions. Because the model draws on patterns of movement learned from video, a "giant pixelated doll" can move with different weight and momentum than a "tiny blue giraffe." Users can further customize these interactions by describing secondary effects. For instance, they can specify that a character leaves a trail of frost or emits a puff of smoke upon jumping. This level of detail informs the AI's "physics" generation, helping the character's presence feel integrated into the environment.
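A character description can be assembled from separate parts: identity, movement style, and effects triggered by actions. The `character_prompt` function is a hypothetical illustration that produces plain prompt text. It is not part of any Project Genie API.

```python
def character_prompt(character: str, movement: str, effects: dict[str, str]) -> str:
    """Compose a character description from identity, movement style, and
    action-triggered effects (hypothetical helper; output is plain prompt text)."""
    text = f"The player is {character} that {movement}"
    for trigger, effect in effects.items():  # dicts preserve insertion order
        text += f"; {trigger}, it {effect}"
    return text + "."


print(character_prompt(
    "a giant pixelated doll",
    "lumbers forward with heavy, deliberate steps",
    {"when it jumps": "emits a puff of smoke", "as it walks": "leaves a trail of frost"},
))
```

Keeping effects as trigger/effect pairs ties each visual flourish to a specific action, which mirrors the kind of detail the guidance recommends.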

Visual Grounding through Image Uploads

For users seeking higher consistency, the platform supports image-to-world generation. This feature lets the AI use an existing photograph or digital painting as a "seed." The model analyzes the spatial layout of the image to extrapolate a 360-degree environment. Google's guidance recommends centering the character in the frame against a clearly defined background. This framing helps the AI distinguish the protagonist from the navigable terrain.
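Before uploading a seed image, a creator might sanity-check the framing. The sketch below assumes the character's bounding box is already known, for example drawn by hand or taken from an object detector. Google's guidance does not give numeric tolerances, so the `tolerance` and `max_area_fraction` thresholds are illustrative assumptions.

```python
def is_good_seed_framing(img_w, img_h, box, tolerance=0.1, max_area_fraction=0.5):
    """Heuristic framing check for a seed image (thresholds are assumptions).

    box is (left, top, right, bottom) in pixels around the character.
    Returns True if the box center lies within `tolerance` (as a fraction of
    each image dimension) of the image center, and the character covers no
    more than `max_area_fraction` of the frame, leaving visible background.
    """
    left, top, right, bottom = box
    cx, cy = (left + right) / 2, (top + bottom) / 2
    centered = (abs(cx - img_w / 2) <= tolerance * img_w
                and abs(cy - img_h / 2) <= tolerance * img_h)
    area_fraction = ((right - left) * (bottom - top)) / (img_w * img_h)
    return centered and area_fraction <= max_area_fraction


print(is_good_seed_framing(1920, 1080, (860, 440, 1060, 640)))  # True: centered, small
print(is_good_seed_framing(1920, 1080, (100, 100, 300, 300)))   # False: off to one side
```

The area check is a rough proxy for "a clearly defined background": a character that fills most of the frame leaves little terrain for the model to extrapolate from.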

Perspective and Navigational Modes

The interactive nature of Project Genie is realized through its toggleable perspectives. Users can switch between a first-person view, which prioritizes immersion and sensory detail, and a third-person view, which is better suited for observing character animations and environmental layouts. This dual-mode exploration is a direct result of the model’s ability to maintain spatial consistency, a historically difficult task for generative video models.

Supporting Data and Industry Context

The rise of world models like Project Genie comes as the generative AI industry pivots from content creation toward "simulation." Some industry analysts project that the market for AI-generated gaming and simulation tools will grow at a compound annual growth rate (CAGR) of over 30% through 2030.
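To put the growth figure in perspective, a compound annual growth rate compounds multiplicatively. A market growing at rate r for n years ends up (1 + r)^n times its starting size. The source does not state a base year, so the example assumes five years of growth (for instance, 2025 to 2030) purely for illustration.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Return the total growth factor implied by a compound annual growth rate.

    cagr is a fraction (0.30 for 30%); years is the number of compounding periods.
    """
    if years < 0:
        raise ValueError("years must be non-negative")
    return (1 + cagr) ** years


# Assumption: five years of growth at exactly 30% (the projection says "over 30%").
multiple = growth_multiple(0.30, 5)
print(f"{multiple:.2f}x")  # prints "3.71x"
```

At 30% over five years, the market would reach a little under four times its starting size. A rate "over 30%" would push that multiple higher.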

Comparisons are frequently drawn between Project Genie and OpenAI’s Sora. While Sora focuses on high-fidelity video synthesis for cinematic purposes, Project Genie is distinct in its interactivity. Sora produces a "movie," whereas Genie produces a "playground." This distinction is critical for the robotics industry; Google DeepMind has indicated that the technology underlying Genie could eventually be used to train autonomous agents in synthetic environments, allowing robots to learn how to navigate complex physical spaces without the risk of damaging hardware in the real world.

Official Responses and Safety Considerations

While Google has expressed optimism regarding the creative applications of Project Genie, the company remains cautious about its experimental nature. In official documentation, Google notes that "Generative AI is experimental" and that the "Nano Banana Pro" previews are meant to be iterative.

Safety remains a primary concern. To limit the generation of harmful or copyrighted content, Project Genie includes built-in filters and moderation layers. Access is restricted to U.S. users aged 18 or older on the Ultra subscription tier. This resembles a staged rollout, in which a controlled group of users surfaces edge cases and potential biases before any wider release.

Broader Impact and Implications

The long-term implications of Project Genie extend far beyond casual entertainment. For the video game industry, this technology represents a move toward "zero-code" game development. It allows writers, concept artists, and educators to prototype interactive experiences without the need for extensive programming knowledge.

Furthermore, an AI's ability to simulate a world from sensory descriptions could matter for AI research more broadly. By training models to capture causal relationships in a virtual world, such as the fact that a character cannot walk through a wall, researchers hope to move AI closer to "common sense" reasoning.

As Project Genie continues to evolve, it reflects a shift from AI as a tool for information retrieval to AI as a creator of interactive digital spaces. The current prototype invites users to help define this new medium, in which the specificity of a prompt largely determines the world that results. Through detailed environmental descriptions, distinctive character movement, and the strategic use of image-based seeds, users can now pilot a technology that was, until very recently, confined to research papers.