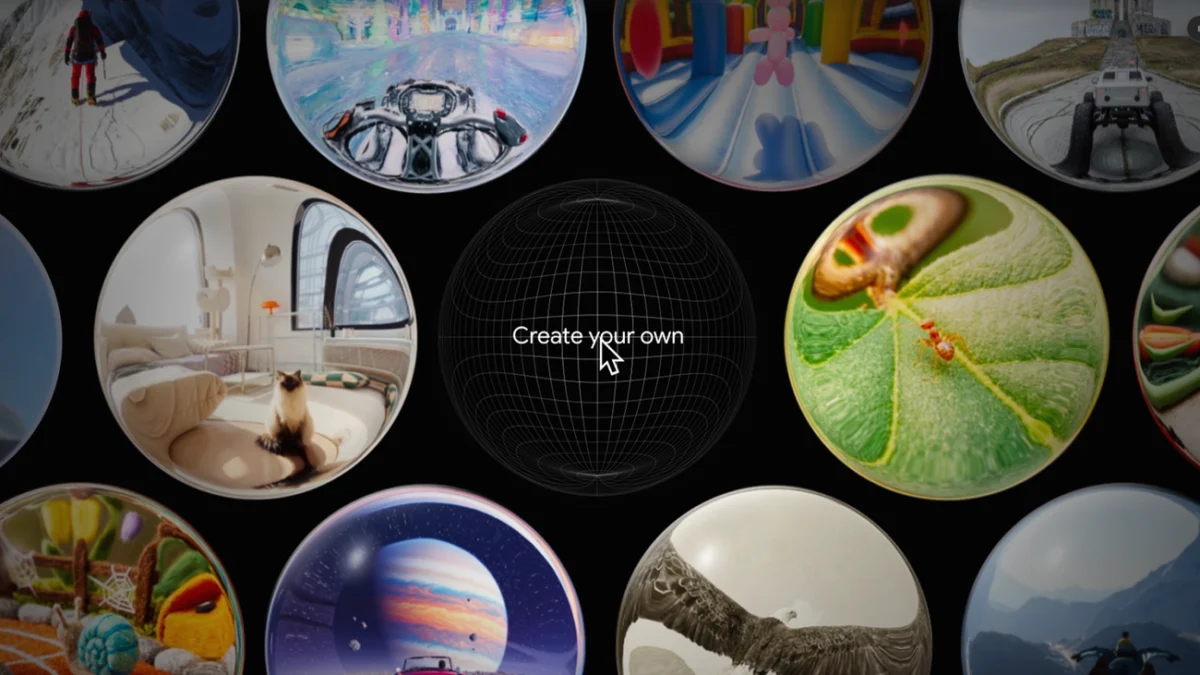

Google DeepMind has moved its experimental research prototype, Project Genie, into a hands-on experience for a select group of users, a notable step for generative artificial intelligence. By allowing users to create, explore, and remix interactive digital environments through simple text and image prompts, the technology represents a shift from passive media consumption to active, AI-driven world-building. Currently available to Google AI Ultra subscribers in the United States who are 18 or older, Project Genie is positioned as a foundational "world model" that learns the underlying physics and interactive properties of a scene without traditional manual programming or game engine scaffolding.

The Technological Foundation of Project Genie

At its core, Project Genie is not a traditional game engine like Unreal Engine or Unity; rather, it is a generative model trained on massive datasets of video footage. Unlike previous AI models that were trained on static images or text alone, Project Genie was developed by analyzing hundreds of thousands of hours of gameplay and real-world video. This training allows the model to understand how objects should move and interact—concepts such as gravity, collision, and perspective—without being explicitly programmed with these rules.

The system utilizes a specialized architecture known as a "latent action model." This allows the AI to infer what actions a character can take within a generated environment. When a user inputs a command to move a character, the model predicts what the next frame of the world should look like based on that movement, creating a seamless, real-time interactive experience. This approach bypasses the need for the complex coding typically required to build playable environments, democratizing the process of digital creation for non-technical users.
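The two ideas in this section, labelling unlabelled video with inferred actions and predicting the next frame from an action, can be illustrated with a deliberately tiny Python sketch. It is not Project Genie's implementation: the one-dimensional "frame", the function names, and the hand-written rules standing in for learned networks are all assumptions made for illustration.

```python
# Toy illustration only: hand-written rules stand in for learned neural networks.
# A "frame" is a 1-D strip of cells with a single 1 marking the character.
Frame = tuple[int, ...]


def make_frame(pos: int, width: int = 8) -> Frame:
    """Build a frame with the character at index `pos`."""
    return tuple(1 if i == pos else 0 for i in range(width))


def infer_latent_action(prev: Frame, nxt: Frame) -> int:
    """Label an unlabelled transition with the displacement it shows.

    A real latent action model learns such codes from video; here the
    code is simply how far the character moved between two frames.
    """
    return nxt.index(1) - prev.index(1)


def predict_next_frame(frame: Frame, action: int) -> Frame:
    """Stand-in for the dynamics model: shift the character, clamped to the strip."""
    width = len(frame)
    pos = min(max(frame.index(1) + action, 0), width - 1)
    return make_frame(pos, width)


def rollout(start: Frame, actions: list[int]) -> list[Frame]:
    """Generate frames one at a time, each conditioned on the previous frame and an action."""
    frames = [start]
    for action in actions:
        frames.append(predict_next_frame(frames[-1], action))
    return frames


# "Watching" an unlabelled clip yields one inferred action per transition.
clip = [make_frame(2), make_frame(3), make_frame(3), make_frame(2)]
inferred = [infer_latent_action(a, b) for a, b in zip(clip, clip[1:])]

# The same action codes can then drive an interactive rollout from user input.
played = rollout(make_frame(0), [1, 1, -1])
```

The point of the sketch is the loop in `rollout`: each new frame depends only on the frame before it and the chosen action, which is what makes the generated world respond to input instead of playing back as a fixed video.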

Strategic Tips for Environment and Character Creation

To maximize the potential of this experimental interface, Google DeepMind has outlined several strategies for effective world-building. These methods focus on the nuances of multimodal prompting—the ability to combine text, images, and specific behavioral instructions to guide the AI’s generative process.

1. Granular Environment Description

The first step in generating a cohesive world involves high-fidelity environmental descriptions. Users are encouraged to go beyond simple nouns like "forest" or "city" and instead provide specific adjectives and atmospheric conditions. For instance, a prompt specifying a "bioluminescent rainforest during a heavy neon-colored downpour" provides the model with more data points for lighting and texture than a generic request.

The model’s ability to render different art styles is also a key feature. Users can dictate whether a world should appear photo-realistic, mimicking a high-budget cinematic production, or adopt stylized aesthetics such as 8-bit pixel art, claymation, or cel-shaded animation. By defining the "feel" of the world through sensory details like wind intensity or the material composition of the terrain (a moon made of gelatin, for example), users can push the boundaries of the model’s learned physics.

2. Character Dynamics and Navigation

The character serves as the user’s avatar and the primary means of interacting with the generated physics. Project Genie allows for the creation of virtually any entity, from biological creatures to abstract mechanical objects. Beyond visual appearance, the model responds to descriptions of movement. A user might specify that a character "glides on a trail of frost" or "teleports in short bursts."

These behavioral prompts are critical because they shape how the world model predicts the character’s impact on the environment. If a character is described as a "giant heavy robot," the model may generate tremors or dust clouds as it moves. This level of reactive detail is what separates Project Genie from static image generators.

3. Image-to-World Synthesis

One of the most powerful features of Project Genie is its ability to transform a single 2D image into a traversable 3D-like space. Users can upload photographs, sketches, or AI-generated art to serve as the "keyframe" for their world. The model then extrapolates the hidden areas of the image—what lies behind a building or around a corner—to create a persistent environment.

For optimal results, Google’s guidance suggests using images where the intended character is centered and the background is expansive. This provides the AI with enough visual context to define the scale and depth of the world. Once an image is uploaded, users can overlay text prompts to modify the mood, such as turning a bright daytime photo into a "nocturnal, foggy landscape."

4. Semantic Precision and Real-Time Refinement

Project Genie performs best when prompted with direct, action-oriented language. Declarative statements like "The coral reef glows when touched" are more effective than vague or overly poetic descriptions. To assist users who may struggle with prompt engineering, Google has integrated the Gemini AI app into the workflow. Gemini can take a basic idea and rewrite it into a structured prompt that Project Genie can more easily interpret.
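To make the idea of a structured prompt concrete, here is a minimal Python sketch that assembles the elements discussed above (environment, character, movement, art style, perspective) into short declarative sentences. It is only a way to organize text before pasting it into the interface: the `WorldPrompt` class and its field names are hypothetical and do not correspond to any Project Genie or Gemini API.

```python
from dataclasses import dataclass


@dataclass
class WorldPrompt:
    """Hypothetical container for the parts of a world-building prompt."""

    environment: str
    character: str
    movement: str
    art_style: str = "photo-realistic"
    perspective: str = "third-person"

    def render(self) -> str:
        """Join the parts into direct, declarative sentences."""
        parts = [
            f"Environment: {self.environment}.",
            f"Character: {self.character}, which {self.movement}.",
            f"Style: {self.art_style}.",
            f"Perspective: {self.perspective}.",
        ]
        return " ".join(parts)


prompt = WorldPrompt(
    environment="a bioluminescent rainforest during a heavy neon-colored downpour",
    character="a giant heavy robot",
    movement="glides on a trail of frost",
    art_style="cel-shaded animation",
)
text = prompt.render()
```

Keeping each element in its own field makes it easy to change one detail, such as the art style, while leaving the rest of the description untouched.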

Before committing to a full exploration, the system provides a preview generated with "Nano Banana Pro," Google’s image generation model. This lets creators see a quick preview of their world and character and adjust navigation and aesthetics before the final environment is generated.

Chronology of Development

The journey of Project Genie began within the research labs of Google DeepMind, where the team sought to create a "General World Model." In early 2024, the team published initial research papers detailing how an AI could learn to act in a virtual world without being given labels for the actions it was taking.

By late 2025, internal testing expanded, and the model was refined to handle higher resolutions and more complex interactive loops. The current rollout to Google AI Ultra subscribers in early 2026 is the first time this level of generative interactivity has been offered to consumers, although access remains limited to that subscriber group. Google has indicated that this is a phased release, with plans to expand eligibility to other regions and subscription tiers as the infrastructure scales.

Market Context and Competitive Landscape

Project Genie enters a competitive landscape where generative AI is rapidly moving from 2D media to temporal and interactive formats. While OpenAI’s Sora has dominated headlines for its ability to generate high-quality video, Project Genie distinguishes itself by focusing on interactivity. Where Sora produces a non-interactive movie, Genie produces a "playable" space.

Industry analysts suggest that this technology could disrupt several sectors:

- Game Development: Independent creators could use Genie to rapidly prototype levels or environmental concepts without needing a full team of artists and programmers.

- Education: Teachers could generate immersive historical or scientific simulations based on textbook descriptions, allowing students to "walk through" ancient Rome or a human cell.

- Professional Training: Companies could create bespoke simulations for safety or technical training based on photos of their own facilities.

Broader Impact and Ethical Considerations

The introduction of Project Genie has significant implications for digital safety and intellectual property. Because the model can generate virtually any environment based on an image, Google has implemented strict safety filters to prevent the creation of harmful, explicit, or copyright-infringing content. The 18+ age restriction and the requirement for a premium subscription serve as initial barriers that keep the prototype phase controlled.

Furthermore, the "unsupervised" nature of the model’s learning—meaning it learns from video without human-labeled instructions—raises interesting questions about the future of AI. If an AI can learn the laws of physics simply by watching videos, it suggests a path toward more advanced robotics and autonomous systems that can navigate the physical world with a similar "intuitive" understanding.

Future Implications

Project Genie supports both first-person and third-person perspectives, a move toward more traditional gaming experiences. Users can toggle between these views to better understand the scale and layout of their creations.

Looking ahead, Google DeepMind researchers have hinted that future versions may allow for multi-user worlds, where several people can explore the same AI-generated environment simultaneously. For now, Project Genie remains a powerful tool for individual creativity, offering a glimpse into a future where the barrier between imagining a world and inhabiting it is virtually non-existent. The current prototype phase is expected to yield vast amounts of user data, which Google will use to refine the model’s responsiveness and visual fidelity, potentially leading to a broader release later this year.