Google DeepMind has officially announced the release of Nano Banana 2, a state-of-the-art image generation model engineered to bridge the gap between high-fidelity creative output and rapid processing speeds. Internally designated as Gemini 3.1 Flash Image, the model represents a significant milestone in Google’s generative AI roadmap, integrating the sophisticated reasoning and world knowledge of the "Pro" series with the low-latency architecture of the "Flash" family. This release follows a period of intense competition in the generative media space, as tech giants and independent labs alike strive to balance computational efficiency with visual accuracy.

The rollout of Nano Banana 2 marks the third major iteration in Google’s specialized image generation lineage over the past year. By embedding this model across the Google ecosystem, including the Gemini app, Google Search, and advertising platforms, the company aims to democratize studio-quality creative tools for both casual users and professional developers. The primary value proposition of Nano Banana 2 lies in its ability to follow complex, multi-layered instructions and maintain subject consistency while delivering results in a fraction of the time required by previous high-end models.

The Evolution of Google’s Generative Vision: A Chronology

The development of the Nano Banana series reflects the rapid pace of innovation within Google DeepMind since the consolidation of Google’s AI research arms. To understand the significance of the second iteration, it is necessary to examine the timeline of its predecessors and the market conditions that necessitated its creation.

In August of last year, Google released the original Nano Banana model. It quickly became a viral sensation, praised for its ability to handle text rendering within images and its nuanced understanding of lighting and texture. This initial version focused on accessibility and creative flexibility, establishing a foundation for what users could expect from Google’s native image synthesis.

By November, the company introduced Nano Banana Pro. This model was designed for "production-ready" workflows, offering advanced intelligence and creative control that rivaled industry leaders like Midjourney and DALL-E. However, the Pro model’s high parameter count meant longer generation times, making it less suitable for real-time iteration or mobile-first applications.

The launch of Nano Banana 2 today completes the current strategic cycle. By using the Gemini 3.1 Flash architecture, Google DeepMind has optimized the model for speed without sacrificing the "world knowledge" that defined the Pro version. This trajectory indicates a shift in AI development away from pure "bigger is better" scaling and toward architectural efficiency and specialized utility.

Bridging the Gap Between Quality and Latency

In the field of generative AI, "latency" refers to the delay between a user submitting a prompt and the model producing an output. For professional designers and marketing teams, high latency can disrupt the creative flow, making it difficult to perform the rapid A/B testing or iterative editing that modern workflows require. Nano Banana 2 addresses this by using a distilled architecture that prioritizes throughput.
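To make the definition of latency concrete, the following is a minimal Python sketch of how a team might time prompt-to-output delay. It does not call any Google API: `fake_generate` is a hypothetical stand-in that simply sleeps for a few milliseconds, and `measure_latency` is an illustrative helper name, not part of any SDK.

```python
import statistics
import time
from typing import Callable, List


def measure_latency(generate: Callable[[str], bytes], prompt: str, runs: int = 5) -> List[float]:
    """Return the wall-clock seconds taken by each of `runs` calls to `generate`."""
    timings = []
    for _ in range(runs):
        start = time.perf_counter()  # monotonic, high-resolution clock
        generate(prompt)
        timings.append(time.perf_counter() - start)
    return timings


def fake_generate(prompt: str) -> bytes:
    """Hypothetical stand-in for an image model call; it only simulates a delay."""
    time.sleep(0.01)
    return prompt.encode("utf-8")


timings = measure_latency(fake_generate, "flat lay infographic explaining the water cycle", runs=3)
median_latency = statistics.median(timings)
print(f"median latency over {len(timings)} runs: {median_latency:.3f} s")
```

Swapping `fake_generate` for a real client call would give comparable figures for two models under the same prompt. The median is used here because a single slow request can skew the mean.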

Despite its "Flash" designation, Nano Banana 2 retains the advanced reasoning capabilities of the Gemini 3.1 family. This means the model does not merely generate pixels based on patterns; it understands the semantic relationships between objects in a prompt. For instance, when tasked with creating a "flat lay infographic explaining the water cycle," the model correctly identifies the necessary scientific components—evaporation, condensation, precipitation—and organizes them in a logically coherent visual structure.

Early internal testing suggests that Nano Banana 2 can generate high-resolution images significantly faster than the Pro model while maintaining a high "Prompt Adherence Score." Prompt adherence, the degree to which an output matches the instructions it was given, is critical for enterprise users who require the AI to follow strict brand guidelines or specific spatial arrangements.

Advanced Creative Control and Subject Consistency

One of the most persistent challenges in AI image generation has been subject consistency—the ability to keep a character or object looking identical across multiple generated frames. Nano Banana 2 introduces enhanced subject preservation features, which are vital for storytelling, comic creation, and brand mascot development.

In the previous generation of models, a user might generate a character in a "farm setting" and then struggle to place that same character in a "city setting" without the AI altering the character’s features. Nano Banana 2 utilizes improved embedding techniques to lock in specific visual traits. This allows creators to build narrative sequences, such as a three-panel story of characters building a treehouse, where the characters’ identities remain stable across different angles and lighting conditions.

Furthermore, the model offers improved "instruction following." This refers to the AI’s ability to interpret nuanced modifiers, such as specific aspect ratios (16:9, 9:16, 1:1), artistic styles (Synthetic Cubism, photorealism, pop art), and localized cultural details. For example, the model can take a sign that reads "Native Wildlife" and accurately translate and re-render the text and imagery for an Indian setting, complete with Hindi script and indigenous flora, all while maintaining the original’s aesthetic quality.
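The aspect ratios mentioned above translate into pixel dimensions through simple arithmetic. The sketch below is illustrative only: the helper name `dimensions_for` and the 1024-pixel long edge are assumptions chosen for the example, not documented properties of Nano Banana 2.

```python
from typing import Tuple


def dimensions_for(aspect_ratio: str, long_edge: int = 1024) -> Tuple[int, int]:
    """Convert a 'W:H' ratio into (width, height), with the longer side equal to long_edge."""
    w_part, h_part = (int(part) for part in aspect_ratio.split(":"))
    if w_part <= 0 or h_part <= 0:
        raise ValueError(f"invalid aspect ratio: {aspect_ratio!r}")
    if w_part >= h_part:
        # Landscape or square: width is the long edge.
        return long_edge, round(long_edge * h_part / w_part)
    # Portrait: height is the long edge.
    return round(long_edge * w_part / h_part), long_edge


for ratio in ("16:9", "9:16", "1:1"):
    print(ratio, dimensions_for(ratio))
# 16:9 (1024, 576)
# 9:16 (576, 1024)
# 1:1 (1024, 1024)
```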

A New Standard for AI Grounding in Visual Media

A standout feature of Nano Banana 2 is its integration with Google Search for "grounding." Grounding is the process of linking AI outputs to verifiable, real-world information to prevent "hallucinations"—instances where the AI generates factually incorrect or nonsensical imagery.

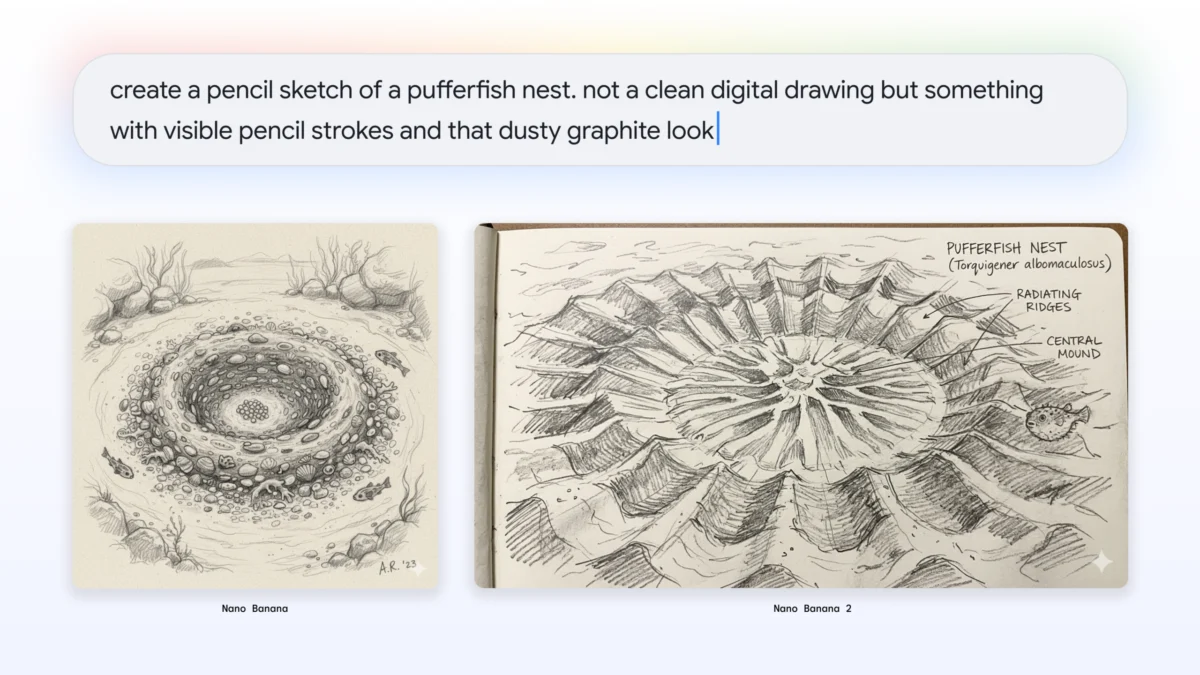

In the context of Nano Banana 2, this means that when a user asks for an image of a specific scientific phenomenon or a historical landmark, the model can reference Google’s vast search index to ensure visual accuracy. A notable application of this is in the "AI Mode" within Google Search. If a user searches for a "pufferfish nest," the model can generate a scientifically accurate diagram based on real-world biological data, rather than a generic or imaginative interpretation.

This capability has profound implications for education and technical communication. By grounding visual generation in factual data, Google is positioning Nano Banana 2 as a tool for information synthesis rather than just artistic expression.

Robust Provenance and the Ethics of Synthetic Content

As the quality of AI-generated imagery approaches photorealism, the risks associated with misinformation and deepfakes have escalated. Google DeepMind has responded to these concerns by integrating robust provenance tools directly into the Nano Banana 2 workflow.

The model utilizes SynthID, a proprietary technology that embeds an imperceptible digital watermark directly into the pixels of generated images. Unlike traditional watermarks, SynthID is resistant to common edits such as cropping, resizing, or color adjustments. According to Google, the SynthID verification feature in the Gemini app has been utilized over 20 million times since its launch in November, indicating a high level of user interest in identifying AI-generated content.

In addition to SynthID, Nano Banana 2 supports C2PA (Coalition for Content Provenance and Authenticity) Content Credentials. This is an industry-standard metadata format that provides a "nutrition label" for digital media, detailing the history of the file and the tools used to create it. By coupling SynthID with C2PA, Google provides a multi-layered approach to transparency, allowing users to verify not just whether AI was used but how it was applied.

Industry Impact and Strategic Implications

The release of Nano Banana 2 is likely to prompt a response from other major players in the AI sector. Companies like OpenAI and Anthropic have also been working on multimodal models that integrate vision and text, but Google’s advantage lies in its massive distribution network. By embedding Nano Banana 2 into Google Ads, for instance, the company provides small business owners with the ability to generate high-end marketing assets instantly, potentially disrupting the traditional stock photography and graphic design markets.

Industry analysts suggest that the "Flash" approach to image generation will become the new standard. As generative AI moves from a novelty to a utility, they argue, speed and cost-effectiveness will matter more than massive, slow-moving models in 90% of use cases.

Naina Raisinghani, Product Manager at Google DeepMind, emphasized that the goal of Nano Banana 2 is to provide the "perfect tool for every workflow." Whether a user needs the maximum factual accuracy of the Pro model or the rapid iteration of the Flash model, the current portfolio covers the full spectrum of creative and professional needs.

Conclusion and Future Outlook

Nano Banana 2 represents a maturation of Google’s AI strategy. By focusing on the intersection of speed, reasoning, and provenance, Google DeepMind is addressing the practical barriers to AI adoption. The model is rolling out today across the Gemini app—featuring new "templates" for easy style selection—and is being integrated into Google Search and creative "Flow" environments.

As users begin to explore the capabilities of Gemini 3.1 Flash Image, the focus will likely shift toward how these models can be further personalized. Future updates may include even deeper integration with user-specific data and more refined controls for video and 3D generation. For now, Nano Banana 2 suggests that in AI image generation, speed no longer has to come at the expense of intelligence.