Google has officially announced a significant evolution of its visual search capabilities, introducing multi-object image recognition to its Circle to Search feature. This update, which marks a shift from identifying single items to analyzing entire scenes, debuted alongside the launch of the Samsung Galaxy S26 series and the Google Pixel 10. By leveraging the advanced reasoning capabilities of the Gemini 3 model, Circle to Search can now deconstruct complex images, such as a complete fashion outfit or a biodiverse ecosystem, providing users with a comprehensive breakdown of every component within a single query. Harsh Kharbanda, Director of Product Management for Search at Google, characterized the update as a transformative step for mobile search, moving beyond simple identification toward agentic problem-solving.

The rollout signifies a deepening of the strategic partnership between Google and Samsung, while also showcasing the hardware-software synergy of the new Pixel 10 flagship. While initial availability is restricted to these premium devices, Google has confirmed that the feature will expand to a broader range of Android smartphones in the coming months. This development arrives at a time when visual search is becoming a cornerstone of mobile interaction, with billions of queries already processed through the Circle to Search interface since its inception in early 2024.

The Evolution of Visual Search: From Text to Context

The introduction of multi-object search represents a logical progression in Google’s decades-long effort to index the world’s information. The journey began with text-based queries, evolved into Google Lens for individual object recognition, and culminated in the launch of Circle to Search on the Galaxy S24 series. Historically, visual search required users to isolate a single item, such as a specific pair of shoes or a unique landmark, to receive accurate results. A user who wanted to identify multiple items in a room or an outfit had to perform several consecutive searches, a process that was both time-consuming and tedious.

The current update removes these barriers by allowing for holistic image analysis. Instead of circling a single lamp in a living room, a user can now circle an entire interior design layout. The AI then identifies the furniture, the color palette, and the specific aesthetic style, providing a "mood board" of information and shopping links. This transition from "what is this?" to "what is all of this?" reflects a broader trend in the tech industry toward multimodal AI, where systems process text, images, and context simultaneously to mirror human perception.

Technical Architecture: Gemini 3 and Agentic Reasoning

At the core of this update is Gemini 3, Google’s latest iteration of its generative AI model. Unlike previous versions that relied on simple pattern matching, Gemini 3 utilizes what Google describes as "agentic planning" and reasoning. When a user circles multiple objects, the AI does not just run a single search; it creates a multi-step execution plan. It identifies the most relevant segments of the image, crops them internally, and executes simultaneous visual queries.
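
The planning step described above (pick the relevant segments, then crop them) can be illustrated with a minimal sketch. Google has not published how Gemini 3 does this, so everything here is an assumption made for illustration: the `Box` and `Segment` types, the `plan_crops` function, and the 50% overlap threshold are hypothetical, and the segments are assumed to come from some upstream detector.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle in pixel coordinates (hypothetical type)."""
    left: int
    top: int
    right: int
    bottom: int

    def area(self) -> int:
        # Non-overlapping intersections produce negative extents; clamp to 0.
        return max(0, self.right - self.left) * max(0, self.bottom - self.top)

    def intersect(self, other: "Box") -> "Box":
        return Box(
            max(self.left, other.left),
            max(self.top, other.top),
            min(self.right, other.right),
            min(self.bottom, other.bottom),
        )


@dataclass(frozen=True)
class Segment:
    """A detected object and where it sits in the image (hypothetical type)."""
    label: str
    box: Box


def plan_crops(selection: Box, segments: list[Segment],
               min_overlap: float = 0.5) -> list[Segment]:
    """Keep segments that lie mostly inside the circled area.

    Each kept segment is clipped to the selection, and the result is
    ordered largest first. The threshold is an assumed heuristic.
    """
    planned = []
    for seg in segments:
        clipped = seg.box.intersect(selection)
        if seg.box.area() > 0 and clipped.area() / seg.box.area() >= min_overlap:
            planned.append(Segment(seg.label, clipped))
    return sorted(planned, key=lambda s: s.box.area(), reverse=True)


circled = Box(0, 0, 100, 100)
detected = [
    Segment("shirt", Box(10, 10, 60, 50)),
    Segment("shoes", Box(20, 80, 50, 100)),
    Segment("lamp", Box(90, 0, 200, 100)),  # mostly outside the circle
]
crops = plan_crops(circled, detected)
print([c.label for c in crops])
```

In this toy input the lamp is dropped because less than half of it falls inside the circled area, while the shirt and shoes are kept as separate crops, each of which would become its own query.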

A key technical component of this process is the "visual query fan-out technique." This method allows the system to branch out from a single user action into dozens of background searches. For example, if a user circles a photograph of a coral reef, the AI identifies the individual marine species in it, such as the Honeycomb Filefish and the Moon Jellyfish. It then cross-references these findings with ecological data to explain how these species coexist. The final output is not just a list of names, but a synthesized report that includes AI Overviews, relevant web links, and high-resolution imagery. This level of reasoning requires significant on-device processing power and cloud-based AI infrastructure, which likely explains why the feature is initially exclusive to devices with high-end chips, namely the Galaxy S26 and Pixel 10.
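
The fan-out pattern itself, where one gesture triggers many concurrent queries whose results are merged into a single report, can be sketched with Python's standard `asyncio` module. This is only an illustration of the general pattern, not Google's implementation: `visual_query`, `fan_out`, `synthesize`, the `Match` type, and the `example.com` links are hypothetical placeholders, and the network call is simulated with a short sleep.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Match:
    """Result of one background query (hypothetical type)."""
    region: str
    name: str
    link: str


async def visual_query(region: str) -> Match:
    """Hypothetical stand-in for a single visual search on one crop."""
    await asyncio.sleep(0.01)  # simulates network latency
    name = region.replace("_", " ").title()
    return Match(region, name, f"https://example.com/search?q={region}")


async def fan_out(regions: list[str]) -> list[Match]:
    # One user action becomes many concurrent queries.
    # asyncio.gather returns results in the same order as its inputs.
    return await asyncio.gather(*(visual_query(r) for r in regions))


def synthesize(matches: list[Match]) -> str:
    """Merge the individual results into one combined report."""
    lines = [f"{len(matches)} items identified:"]
    lines += [f"- {m.name}: {m.link}" for m in matches]
    return "\n".join(lines)


report = synthesize(asyncio.run(fan_out(["honeycomb_filefish", "moon_jellyfish"])))
print(report)
```

Because the queries run concurrently, total latency is roughly that of the slowest single query instead of the sum of all of them, which is the practical benefit of fanning out.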

Transformative Impact on Social Commerce and Fashion

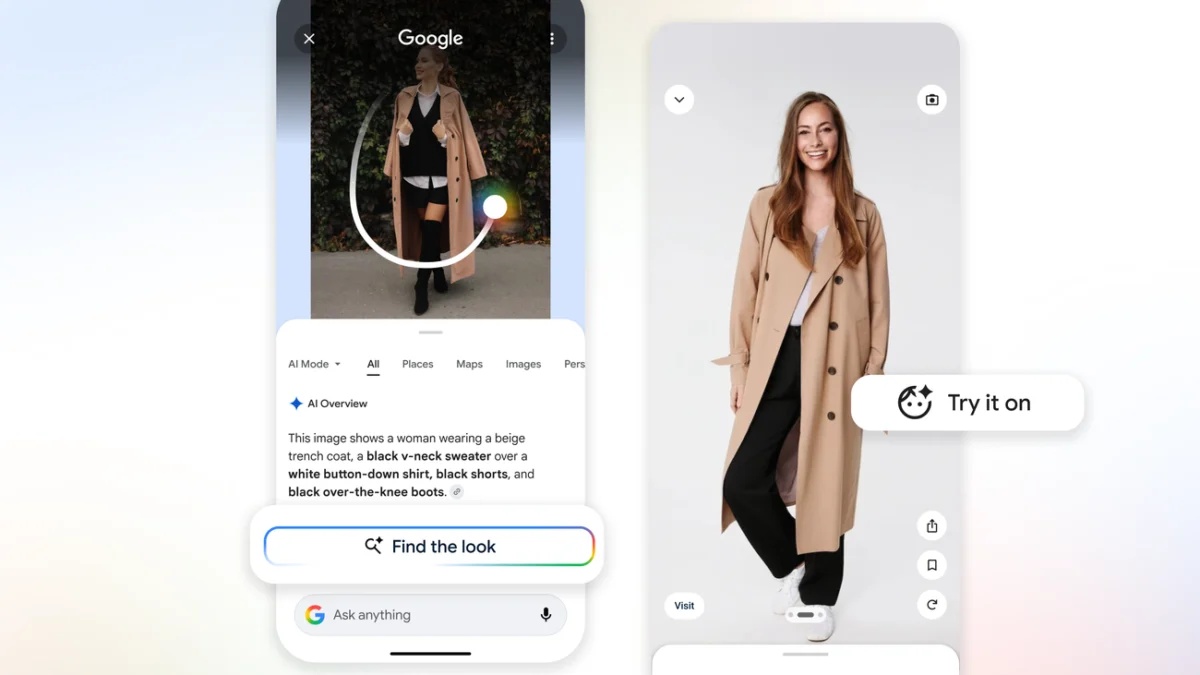

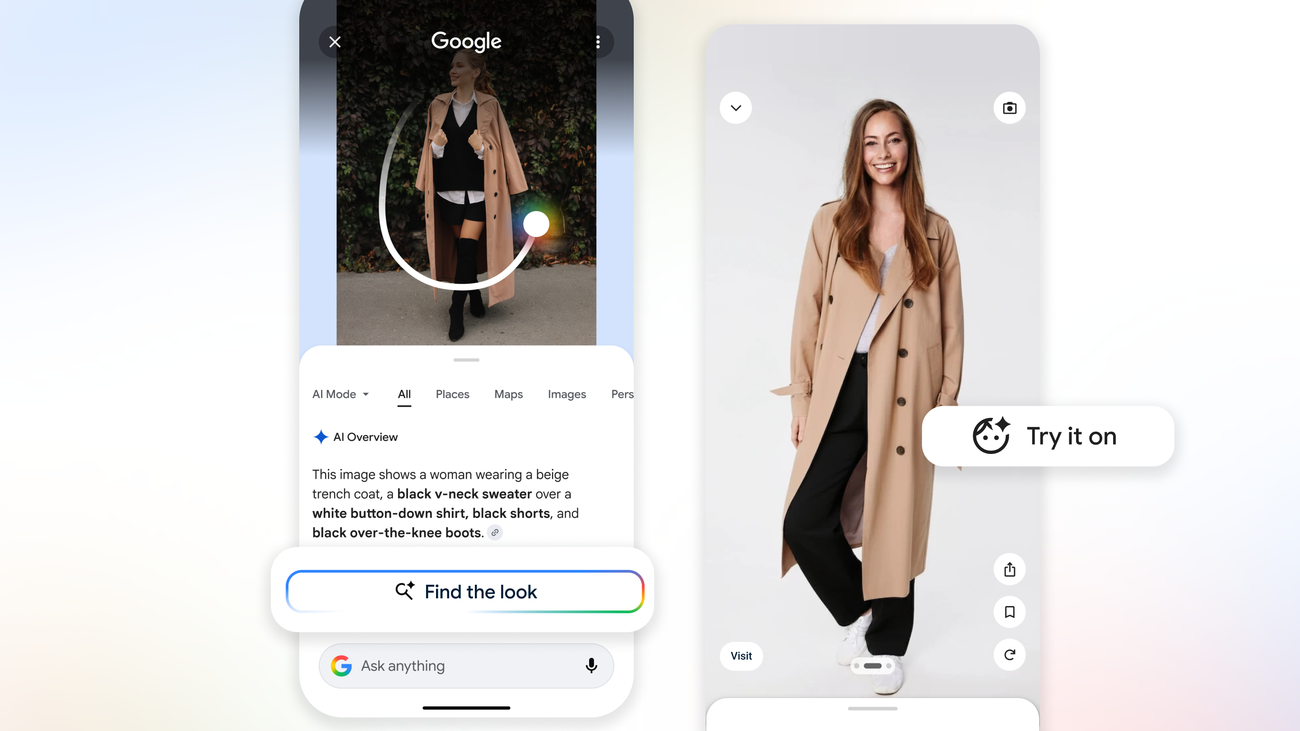

Fashion and shopping have consistently ranked among the most popular use cases for Circle to Search. The new update introduces a "Find the Look" capability specifically designed to capitalize on the rise of social commerce. When a user encounters an outfit on a social media platform like Instagram or TikTok, they can now circle the entire person to deconstruct the ensemble. The AI identifies the shirt, trousers, shoes, and accessories individually, providing similar product listings for each.

To further bridge the gap between discovery and purchase, Google has integrated its virtual "Try On" technology directly into the Circle to Search interface. In supported regions, users can select an identified item and immediately see how it would look on various body types through a virtual dressing room. This integration is expected to have a profound impact on the e-commerce ecosystem. By providing more visual results from a single search, Google is creating new avenues for merchants and small businesses to appear in search results, even if they are not the original brand featured in the user’s image.

Chronology of Development and Market Context

The development of Circle to Search has moved at an aggressive pace since its debut. In January 2024, the feature was launched as a collaborative effort between Google and Samsung to provide a more intuitive way to search without switching apps. By mid-2024, Google had integrated "multisearch," allowing users to add text queries to their visual circles (e.g., circling a plant and typing "care instructions").

The late 2024 and early 2025 period saw the refinement of the Gemini models to handle more complex visual data. The rollout on the Galaxy S26 and Pixel 10 then served as the launchpad for the "agentic" version of the tool. Industry analysts suggest that this rapid iteration is a response to increasing competition in the AI space, particularly from Apple’s "Visual Intelligence" features and specialized shopping AI tools. By embedding these capabilities directly into the Android operating system, Google aims to maintain its dominance in the search market by making search an invisible, integrated part of the mobile experience.

Industry Reactions and Strategic Implications

The tech industry has largely viewed the multi-object update as a move to solidify Android’s lead in the "AI Phone" era. Analysts from major research firms note that while many AI features feel like novelties, Circle to Search addresses a genuine pain point in mobile navigation. By reducing the number of steps required to gather information, Google is increasing user engagement within its ecosystem.

However, the move also raises questions regarding the future of the open web and digital advertising. As Google’s AI Overviews provide more direct answers and curated shopping lists within the search interface, there is ongoing debate about how this will affect click-through rates for independent websites. Google has addressed these concerns by stating that the feature includes "links out to the web to dive deeper," suggesting that the goal is to act as a more efficient portal rather than a walled garden.

From a merchant perspective, the "visual query fan-out" represents a significant opportunity. Brands that optimize their product imagery for visual search are likely to see a surge in discovery. As the AI becomes better at identifying textures, patterns, and silhouettes, the importance of high-quality, descriptive metadata for online products will only increase.

Broader Implications for the Future of Mobile Interaction

The shift toward multi-object search is more than just a software update; it is a preview of a future where the smartphone camera and screen act as a continuous interface for the physical and digital worlds. As wearable technology, such as augmented reality (AR) glasses, becomes more prevalent, the logic behind Circle to Search is expected to migrate to "always-on" visual assistants. The ability to glance at a scene and receive an instantaneous, multi-layered breakdown of information is the ultimate goal of the "Search Anywhere" philosophy.

For now, the focus remains on the smartphone. The rollout on the Samsung Galaxy S26 and Pixel 10 serves as a benchmark for what premium Android devices can achieve. As the technology matures and the computational requirements are optimized, multi-object search will likely become a standard feature across the entire Android portfolio, fundamentally changing how hundreds of millions of people interact with the content on their screens.

In summary, the latest update to Circle to Search represents a milestone in the integration of generative AI and visual discovery. By combining the reasoning of Gemini 3 with a user-friendly gesture-based interface, Google has expanded what is possible in mobile search. Whether users are identifying marine life, shopping for the latest trends, or designing their homes, they now have a tool that can see the whole picture, providing a more comprehensive and intuitive way to explore the world around them.