The Data Bottleneck in Global Conservation

For decades, wildlife monitoring relied on physical tracks, scat analysis, or expensive aerial surveys. The advent of the camera trap, a rugged, motion-activated device, revolutionized the field by providing a non-invasive way to observe animals in their natural habitats without human interference. However, this technological leap created an unforeseen logistical crisis. A single study can deploy hundreds of cameras, each producing tens of thousands of images over several months. Many of these frames are "false triggers" caused by moving vegetation or shadows; others show common species that are important to record but do not require the immediate attention of a senior biologist.

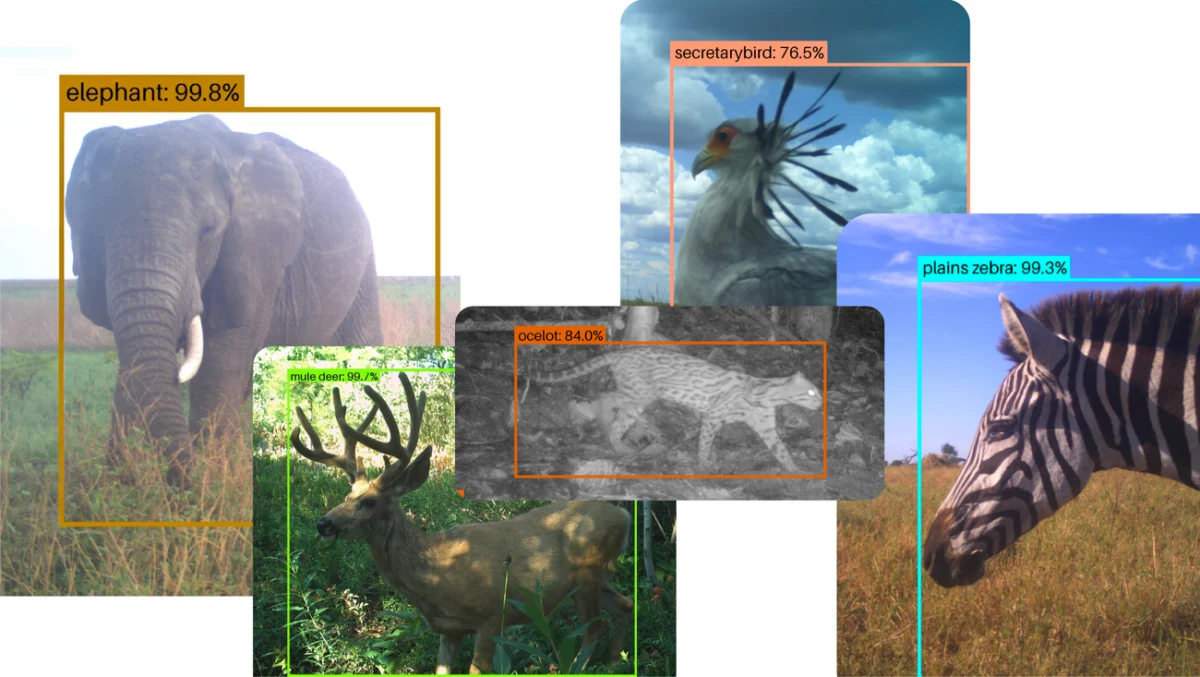

Before the integration of advanced machine learning tools like SpeciesNet, human researchers or volunteers had to manually review every frame. This process was not only prone to fatigue-related errors but also created a multi-year backlog of data. In many cases, by the time a researcher identified a decline in a specific population, the data was already several years old, making it difficult to implement timely conservation interventions. SpeciesNet addresses this "big data" problem by employing deep learning architectures capable of recognizing animals under challenging conditions, including low light, heavy foliage, and obscured angles.

Chronology of SpeciesNet: From Proprietary Research to Open-Source Utility

The development of SpeciesNet represents a multi-year effort to democratize high-end technology for the public good. The project’s timeline reflects a transition from internal experimentation to a global infrastructure for conservation:

- 2019: Google Research begins collaborating with the Wildlife Insights consortium—a partnership including Conservation International, the Smithsonian National Zoo and Conservation Biology Institute, WWF, and the Wildlife Conservation Society. The goal was to create a centralized platform where AI could assist in processing camera trap data.

- 2019–2022: The model undergoes rigorous training on millions of labeled images. During this period, it is integrated into the Wildlife Insights platform, where it begins assisting major international NGOs in managing their vast image repositories.

- Late 2023: Recognizing the need for local researchers to have more control over their data and the ability to run models offline or on their own servers, Google officially open-sources SpeciesNet. This allows developers to download the model code from repositories like GitHub and adapt it to specific regional needs.

- 2024–2025: The model sees widespread adoption by state agencies and local research institutes. Its one-year anniversary as an open-source tool reveals a diverse ecosystem of users, from government departments in North America to grassroots conservation networks in the Amazon.

Case Study: Analyzing More Than a Decade of Data in the Serengeti

In Tanzania’s Serengeti National Park, the Snapshot Serengeti project stands as one of the world’s most significant long-term camera trap studies. Since 2010, the project has maintained a vast grid of cameras to monitor how species interact across the landscape. While the project initially relied on a massive network of online citizen scientists to tag images, the sheer volume of data eventually outpaced the capacity of even the most dedicated volunteers.

Todd Michael Anderson, a project leader at North Carolina’s Wake Forest University, recently used SpeciesNet to address a backlog of 11 million photographs. The model processed more than a decade’s worth of fauna data in just a few days, a workload that would have been impractical ten years ago. This rapid analysis gives the Snapshot Serengeti team a near-real-time understanding of animal abundance and behavior in one of Africa’s most biodiverse regions. The ability to compare current wildlife trends against a ten-year baseline is critical for identifying how climate change and human encroachment are altering the migratory patterns of the Serengeti’s iconic megafauna.
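To put that backlog in perspective, the short calculation below estimates the average processing rate such a run implies. The 11 million figure comes from the project; the three-day duration is only an assumed stand-in for "a few days", and the function name is illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def required_throughput(num_images, days):
    """Average images per second needed to finish num_images within `days`."""
    if days <= 0:
        raise ValueError("days must be positive")
    return num_images / (days * SECONDS_PER_DAY)


# 11 million images is from the text; 3 days is an assumption, not a reported figure.
rate = required_throughput(11_000_000, days=3)
print(f"{rate:.1f} images/second")  # prints 42.4 images/second
```

Under that assumption the pipeline would need to sustain roughly 42 images per second around the clock, a rate that is only realistic with automated, batched inference.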

Behavioral Shifts and Biodiversity in South America

The application of SpeciesNet in Colombia offers a glimpse into how AI can uncover subtle ecological shifts. The Humboldt Institute, a long-term collaborator, has integrated the model into its national monitoring efforts. Recently, the institute launched "Red Otus," a comprehensive network of camera traps spanning both public and private lands across Colombia’s varied ecosystems, including the Amazon Rainforest.

Analysis of tens of thousands of images via SpeciesNet has revealed notable behavioral changes in local wildlife. The data suggest that certain mammals are becoming increasingly nocturnal, a phenomenon known as "diel activity shifting." Researchers hypothesize that this is a survival strategy to avoid human activity and threats that occur during daylight hours. The AI has also helped track changes in bird migration timing and daily activity patterns. In developed areas, birds have been observed appearing later in the morning, potentially as a tactic to avoid predators that are more active near human settlements. These insights are instrumental for Colombian policymakers as they design protected corridors and land-use regulations.
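One simple way to quantify a shift toward nocturnality is to compare the share of detections that fall at night across two survey periods. The sketch below is illustrative only: the fixed 18:00 to 06:00 night window, the function name, and the sample timestamps are all assumptions, and a real analysis would more likely use local sunrise and sunset times and many more detections.

```python
from datetime import datetime


def nocturnal_fraction(timestamps, night_start=18, night_end=6):
    """Share of detections whose hour falls in the night window.

    The window wraps around midnight: hours >= night_start or < night_end.
    The default 18:00-06:00 window is an assumption for illustration.
    """
    if not timestamps:
        raise ValueError("no detections to analyze")
    night = sum(1 for t in timestamps
                if t.hour >= night_start or t.hour < night_end)
    return night / len(timestamps)


# Made-up detection times for one species in two survey periods.
baseline = [datetime(2015, 3, 1, h) for h in (9, 13, 16, 22)]
recent = [datetime(2024, 3, 1, h) for h in (2, 11, 20, 23)]

shift = nocturnal_fraction(recent) - nocturnal_fraction(baseline)
print(f"change in nocturnal share: {shift:+.2f}")  # prints +0.50
```

A positive change means a larger share of detections now occurs at night; whether that reflects a real behavioral shift still depends on consistent camera placement and sampling effort between the two periods.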

Integration into North American State Resource Management

In the United States, the Idaho Department of Fish and Game (IDFG) exemplifies how SpeciesNet is being used to modernize state-level wildlife management. While southern Idaho’s open terrain allows for traditional aerial surveys, the northern part of the state is characterized by dense, rugged forests where helicopters are less effective. To manage these areas, the IDFG deploys hundreds of camera traps to monitor populations of black bears, coyotes, mule deer, and elk.

The agency collects millions of images annually. By using SpeciesNet to pre-sort these images by species, the IDFG has significantly reduced the administrative burden on its human experts. Biologists still conduct a final review of sensitive data to catch misclassifications, but the AI handles the "heavy lifting" of filtering out empty frames and identifying common species. This hybrid approach, which combines AI speed with human expertise, ensures that the state’s wildlife management decisions are based on the most current data available, which is essential for setting hunting quotas and habitat restoration priorities.
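The hybrid workflow described above can be sketched as a small triage step. This is a minimal illustration, not IDFG's pipeline: the record layout (`filepath`, `label`, `score`), the `blank` label, and the 0.8 review threshold are hypothetical and do not reflect the actual SpeciesNet output schema.

```python
from collections import defaultdict


def triage(predictions, review_threshold=0.8, blank_label="blank"):
    """Split model predictions into per-species bins, empty frames,
    and a queue of low-confidence images for human review.

    Each prediction is assumed to be a dict with 'filepath', 'label',
    and 'score' keys (a hypothetical layout chosen for this sketch).
    """
    auto_sorted = defaultdict(list)
    needs_review = []
    for p in predictions:
        if p["score"] < review_threshold:
            # Low confidence: a biologist should look at this frame.
            needs_review.append(p["filepath"])
        else:
            auto_sorted[p["label"]].append(p["filepath"])
    # Confidently empty frames are set aside rather than reviewed.
    empty = auto_sorted.pop(blank_label, [])
    return dict(auto_sorted), empty, needs_review


predictions = [
    {"filepath": "a.jpg", "label": "elk", "score": 0.97},
    {"filepath": "b.jpg", "label": "blank", "score": 0.99},
    {"filepath": "c.jpg", "label": "black bear", "score": 0.41},
    {"filepath": "d.jpg", "label": "elk", "score": 0.88},
]
by_species, empty, review = triage(predictions)
print(by_species, empty, review)
```

In practice the threshold would be tuned per species, with a lower tolerance for error on sensitive or rarely seen animals.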

Localization and Endemic Species in Australia

The open-source nature of SpeciesNet has been particularly beneficial for the Wildlife Observatory of Australia (WildObs). Australia is home to a high percentage of endemic species—animals found nowhere else on Earth—many of which were not represented in the original global training set of SpeciesNet. Because the model is open-source, WildObs researchers were able to take the foundational architecture of SpeciesNet and "fine-tune" it using local datasets.

This localized version of the model can now identify specific Australian fauna, such as the red-legged pademelon and the endangered southern cassowary. During the Australian springtime of 2025, the model successfully identified cassowaries engaging in "midday strolls" and interacting with camera equipment. For Australian conservationists, the ability to customize a world-class AI model to recognize rare, regional species is a game-changer for protecting the continent’s unique evolutionary heritage.
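How WildObs fine-tuned the model is not described here, but the simplest form of the idea is to keep a pretrained network's feature extractor fixed and train only a new classification layer on local labels. The sketch below shows that pattern in plain Python with a softmax classifier; the two-dimensional "features", the class indices, and all function names are invented for illustration, and a real workflow would use a deep learning framework and features produced by the actual model.

```python
import math
import random


def softmax(logits):
    """Convert raw scores into probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def scores(weights, biases, x):
    """Linear scores for one feature vector, one score per class."""
    return [sum(w_j * x_j for w_j, x_j in zip(w, x)) + b
            for w, b in zip(weights, biases)]


def train_head(features, labels, num_classes, epochs=200, lr=0.5, seed=0):
    """Fit a new linear softmax head on fixed (frozen) feature vectors
    using per-sample gradient descent on the cross-entropy loss."""
    rng = random.Random(seed)
    dim = len(features[0])
    weights = [[rng.uniform(-0.01, 0.01) for _ in range(dim)]
               for _ in range(num_classes)]
    biases = [0.0] * num_classes
    for _ in range(epochs):
        for x, y in zip(features, labels):
            probs = softmax(scores(weights, biases, x))
            for c in range(num_classes):
                # Gradient of cross-entropy with respect to the class score.
                grad = probs[c] - (1.0 if c == y else 0.0)
                biases[c] -= lr * grad
                for j in range(dim):
                    weights[c][j] -= lr * grad * x[j]
    return weights, biases


def predict(weights, biases, x):
    """Index of the highest-scoring class for one feature vector."""
    s = scores(weights, biases, x)
    return max(range(len(s)), key=s.__getitem__)


# Invented stand-ins for features a frozen backbone might emit.
# Class 0 = red-legged pademelon, class 1 = southern cassowary.
features = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.8]]
labels = [0, 0, 1, 1]
weights, biases = train_head(features, labels, num_classes=2)
print(predict(weights, biases, [0.95, 0.1]), predict(weights, biases, [0.1, 0.9]))
```

Training only the final layer needs far fewer labeled images than training a model from scratch, which is part of why an open foundation model is useful to regional teams with small datasets.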

Technical Analysis and Future Implications

The success of SpeciesNet lies in its robustness. Unlike earlier iterations of computer vision models that required clear, centered subjects, SpeciesNet is trained to recognize animals from various angles and in varying lighting conditions. Its ability to identify a species even when only a tail, a limb, or a blurred profile is visible drastically reduces the rate of "unidentified" frames.

From a broader perspective, the open-sourcing of SpeciesNet signals a shift in the role of big tech in environmental science. By providing the tools for free, Google and its partners are lowering the barrier to entry for conservation efforts in developing nations, where the cost of proprietary software or high-level data scientists can be prohibitive. The implications for policy are profound: as AI makes it easier to quantify biodiversity loss or recovery, international treaties and national laws can be enforced with greater empirical backing.

As the model continues to evolve, the next frontier for SpeciesNet involves real-time processing. Future iterations may be deployed on "edge" devices—cameras with built-in AI chips that can process images instantly and transmit alerts when a rare species or a poacher is detected. For now, the one-year milestone of SpeciesNet’s open-source journey stands as a testament to the power of collaborative technology in the fight to preserve the natural world. Through the lens of millions of camera traps, and with the analytical power of AI, the hidden lives of the world’s wildlife are finally being brought into focus, providing the data necessary to ensure their survival for generations to come.