Google has officially announced a significant expansion of its real-time translation capabilities, bringing the "Live Translate with headphones" feature to the iOS platform and extending its geographic reach to several key international markets. Previously a centerpiece of the Android ecosystem and often associated with Google’s proprietary Pixel Buds hardware, this feature is now accessible to iPhone users and compatible with any pair of headphones. The rollout includes enhanced support for users in France, Germany, Italy, Japan, Spain, Thailand, and the United Kingdom, marking a pivotal step in Google’s mission to leverage artificial intelligence (AI) to bridge global communication gaps. By supporting over 70 languages, the update aims to provide seamless, near-instantaneous verbal interpretation for travelers, international business professionals, and language learners alike.

The Evolution of Real-Time Interpretation Technology

The introduction of Live Translate on iOS represents a strategic shift for Google. For years, real-time translation was marketed as a "hero feature" for the Pixel smartphone line and the Pixel Buds. By decoupling the software from specific hardware requirements, Google is transitioning toward a platform-agnostic model, prioritizing the ubiquity of its Translate service over hardware exclusivity. This move acknowledges the reality of a multi-platform world where users frequently mix and match devices, such as using an iPhone paired with third-party noise-canceling headphones.

The technical foundation of this feature relies on the integration of several sophisticated AI processes: Speech-to-Text (STT), Neural Machine Translation (NMT), and Text-to-Speech (TTS). When a user activates Live Translate, the microphone on the connected headphones or the smartphone captures spoken dialogue. This audio is processed by Google’s neural networks to identify the language and intent, translated into the target language with attention to syntax and context, and then read back into the user’s ears via the headphones. The latest updates leverage Google’s "Gemini" era advancements, which have significantly reduced latency—the delay between the speaker finishing a sentence and the listener hearing the translation.
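The three stages described above can be pictured as a simple chain of functions. The following is a minimal sketch, not Google's implementation: the function names, the `Transcript` type, and the toy phrasebook are all hypothetical stand-ins, and the "audio" is just UTF-8 text so the example can run without any speech models.

```python
from dataclasses import dataclass


@dataclass
class Transcript:
    text: str
    language: str  # detected source-language code


# Hypothetical toy data standing in for a real translation model.
TOY_PHRASEBOOK = {
    ("fr", "en"): {"bonjour": "hello", "merci": "thank you"},
}


def speech_to_text(audio: bytes) -> Transcript:
    """Stage 1 (STT): a stand-in that treats the bytes as UTF-8 text."""
    text = audio.decode("utf-8").strip()
    known_french = TOY_PHRASEBOOK[("fr", "en")]
    # Toy language identification: anything in the French table is "fr".
    language = "fr" if text.lower() in known_french else "en"
    return Transcript(text=text, language=language)


def translate(transcript: Transcript, target: str) -> str:
    """Stage 2 (NMT): a stand-in that looks phrases up in the toy table."""
    table = TOY_PHRASEBOOK.get((transcript.language, target), {})
    # Fall back to the original text when no translation is known.
    return table.get(transcript.text.lower(), transcript.text)


def text_to_speech(text: str, language: str) -> bytes:
    """Stage 3 (TTS): a stand-in that returns tagged bytes, not real audio."""
    return f"[{language}] {text}".encode("utf-8")


def live_translate(audio: bytes, target: str) -> bytes:
    """Chain the three stages in the order the article describes."""
    transcript = speech_to_text(audio)
    translated = translate(transcript, target)
    return text_to_speech(translated, target)


print(live_translate("Bonjour".encode("utf-8"), "en"))  # b'[en] hello'
```

Because each stage waits for the previous one to finish, the delay the listener perceives is roughly the sum of the three stage times, which is why a speedup in any single stage shortens the overall latency.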

Strategic Geographic Expansion and Market Context

The selection of France, Germany, Italy, Japan, Spain, Thailand, and the U.K. for this expansion is not incidental. According to data from the United Nations World Tourism Organization (UNWTO), these nations consistently rank among the most visited destinations globally. France, Spain, and Italy remain the top European hubs for international arrivals, while Japan and Thailand represent the primary gateways for tourism in East and Southeast Asia.

By targeting these specific regions, Google is positioning its translation tools to serve the highest concentrations of cross-border interactions. In Japan, for instance, the complexity of the writing system and the linguistic distance from Western languages create a high demand for reliable, real-time audio translation. Similarly, in the U.K. and Germany, which serve as major global financial and industrial hubs, the tool provides a bridge for professional environments where nuance and speed are critical.

A Chronology of Google Translate’s Milestones

To understand the significance of the iOS launch, it is necessary to examine the timeline of Google’s translation advancements:

- 2006: Google Translate launches as a statistical machine translation service, initially relying on United Nations and European Parliament transcripts to "learn" languages.

- 2016: The company transitions to Google Neural Machine Translation (GNMT), which translates whole sentences at a time rather than phrase by phrase, drastically improving accuracy and fluency.

- 2017: Google introduces the first-generation Pixel Buds, featuring "exclusive" real-time translation. This required pairing the earbuds with a Google Pixel phone.

- 2019-2021: "Interpreter Mode" launches for Google Assistant in 2019, and Live Translate becomes a system-level feature on Pixel 6 devices in 2021, powered by the custom Tensor chip.

- 2023: Google begins integrating Large Language Model (LLM) capabilities into Translate, allowing for better handling of idioms and regional dialects.

- Present Day: The democratization of the feature occurs with the iOS rollout and the removal of proprietary headphone requirements, allowing any Bluetooth or wired headset to function as a translation device.

Technical Specifications and User Integration

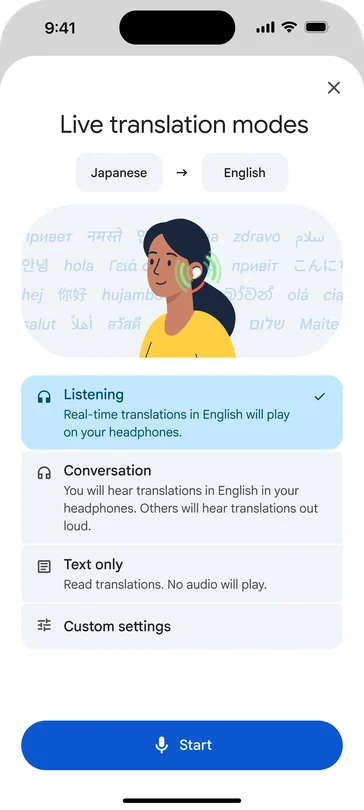

The updated Live Translate feature is housed within the standard Google Translate application. To utilize the function, users must ensure their app is updated to the latest version available on the Apple App Store or Google Play Store. The workflow is designed for simplicity: once headphones are connected—whether they are Apple AirPods, Sony WH-1000XM series, or basic wired earbuds—the user selects the "Live Translate" option within the app.

The software offers two primary modes of interaction:

- Conversation Mode: The screen is split between two languages. Each participant taps their respective microphone icon to speak. The translated text appears on the screen for the interlocutor to read, while the audio is played back through the headphones for the user.

- Continuous Interpretation: Designed for listening to a lecture or a one-way speech, where the app provides a steady stream of translated audio directly into the user’s ears.

Supporting over 70 languages means that the system can handle thousands of potential language pairs. While major languages like English, Mandarin, Spanish, and French have the highest levels of accuracy due to the volume of training data available, Google has continued to add "low-resource" languages, ensuring that the tool remains useful in less globally dominant linguistic regions.
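The "thousands of pairs" figure follows from simple counting. As a quick check, assuming exactly 70 languages (the article says "over 70", so these are lower bounds) and using a hypothetical helper name, the arithmetic works out as follows:

```python
from typing import Tuple


def language_pair_counts(num_languages: int) -> Tuple[int, int]:
    """Return (directed, undirected) pair counts for a set of languages.

    Directed pairs count French->Japanese and Japanese->French separately;
    undirected pairs count them once.
    """
    directed = num_languages * (num_languages - 1)
    undirected = directed // 2
    return directed, undirected


directed, undirected = language_pair_counts(70)
print(directed)    # 4830
print(undirected)  # 2415
```

Either way of counting lands in the thousands, which is consistent with the claim above.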

Industry Implications and Competitive Landscape

The expansion of Google’s Live Translate directly challenges Apple’s native "Translate" app, which was introduced with iOS 14. While Apple’s solution offers deep system integration and a focus on on-device privacy, Google’s version benefits from a much larger linguistic database and nearly two decades of machine learning refinement. By offering a superior translation experience on Apple’s own hardware, Google aims to maintain its dominance in the AI services sector.

Industry analysts suggest that this move is also a preemptive strike against specialized translation hardware. Companies like Timekettle and Waverly Labs have carved out a niche by selling dedicated translation earbuds. Google’s software-first approach renders these specialized devices less necessary for the average consumer, as the functionality is now bundled into the smartphone and headphones they already own.

Furthermore, the integration of Gemini AI capabilities into the translation pipeline suggests that Google is moving toward "context-aware" translation. Traditional translation often fails to account for the setting—whether a user is in a pharmacy, a boardroom, or a casual cafe. Future iterations of Live Translate are expected to use environmental cues to provide more relevant vocabulary choices, further narrowing the gap between human interpreters and AI.

Official Responses and User Experience Expectations

While Google has not released specific internal metrics regarding the iOS beta testing phase, early feedback from the developer community suggests that the performance on the iPhone 15 and 16 series is comparable to that of the Pixel 9. This is largely due to the high-performance neural engines found in modern mobile processors, which can handle the heavy lifting of speech processing with minimal power consumption.

Language experts have noted that while Live Translate is a revolutionary tool for "survival communication" and basic information exchange, it does not yet replace the need for professional human interpreters in legal, medical, or high-stakes diplomatic settings. The nuances of cultural etiquette and non-verbal communication remain outside the current scope of AI. However, for international travelers, who account for roughly 1.5 billion tourist arrivals in a typical year, the ability to navigate a foreign city or order a meal in a local tongue via their existing headphones is a transformative utility.

Privacy, Data Security, and Ethical Considerations

With the expansion of live audio processing, questions regarding data privacy remain at the forefront. Google has stated that audio processed during Live Translate sessions is used to provide the translation in real-time. On many modern devices, a significant portion of the processing happens on-device to reduce latency and enhance privacy. However, for certain complex language pairs or older hardware, data may be processed in the cloud.

Google’s privacy policy for Translate emphasizes that users have control over their activity history. Nevertheless, as the tool becomes more common in public spaces, ethical questions regarding the recording of third parties without explicit consent may arise. As AI-driven translation becomes a standard feature of mobile operating systems, the industry may see a push for "transparency indicators," such as on-screen alerts or audible chimes that notify all parties when a live translation session is active.

Looking Ahead: The Future of Global Communication

The rollout of Live Translate to iOS and the expansion into seven major international markets is a clear indicator that the "language barrier" is becoming increasingly porous. As Google continues to refine its algorithms, the goal is to reach a state of "natural transparency," where the technology disappears, and two people speaking different languages can converse as if they were speaking the same one.

For the travel industry, this technology could lead to a decrease in "language anxiety," potentially boosting tourism in regions that were previously avoided by non-speakers. For the global workforce, it facilitates a more inclusive environment where regional offices can collaborate more effectively. As Google Translate moves beyond the screen and into the ear, it redefines the smartphone not just as a communication device, but as a universal bridge for human connection. The "Live Translate with headphones" update is now available for download and activation for users across the newly supported regions on both the App Store and Google Play.